Disruption

RMS HWind Hurricane Forecasting and Response and ExposureIQ: Exposure Management Without the Grind

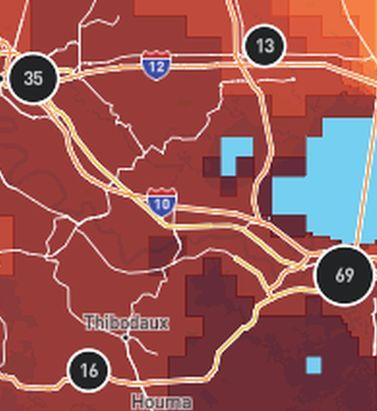

Accessing data in real-time to assess and manage an insurance carrier’s potential liabilities from a loss event remains the holy grail for exposure management teams and is high on a business’ overall wish list.

A 2021 PwC Pulse Survey of U.S. risk management leaders found that risk executives are increasingly taking advantage of “tech solutions for real-time and automated processes, including dynamic risk monitoring (30 percent), new risk management tech solutions (25 percent), data analytics (24 percent) [and] integrated risk management tools on a single platform (19 percent).” PwC suggested that as part of an organization’s wider digital and business transformation process, risk management teams should therefore “use technologies that work together, draw on common data sources, build enterprise-wide analytics and define common sets of metrics.”

Separately, Deloitte’s 2021 third-party risk management (TPRM) survey found that 53 percent of respondents across a range of industry sectors wanted to improve real-time information, risk metrics, and reporting in their organizations. With the pandemic providing the unlikely backdrop for driving innovation across the business world, the Deloitte survey explained the statistic with the suggestion that one impact of COVID-19 “has been a greater need for real-time continuous assessment and alerts, rather than traditional point-in-time third-party assessment.”

Event Forecasting and Response with HWind and ExposureIQ

Natural catastrophe events are a risk analytics flash point. And while growing board-level awareness of the importance of real-time reporting might seem like a positive, without marrying the data with the right tools to gather and process that data, together with a more integrated approach to risk management and modeling functions, the pain points for exposure managers on the event frontline are unlikely to be relieved.
RMS® ExposureIQ™ is an exposure management application available on the cloud-native RMS Intelligent Risk Platform™, which enables clients to centralize exposure data, process it, write direct reports and then run deterministic scenarios to quickly and accurately assess their exposure. When an event is threatening or impacts risks, an exposure management team needs to rapidly process the available data to work out their overall exposure and the likely effect on insured assets. The integration of event response data such as HWind into the ExposureIQ application is where the acquisition of this hazard data really starts to make a difference. The 2022 North Atlantic hurricane season, for example, is upon us, and access to regular, real-time data is relied upon as a crucial part of event response to tropical cyclones. With reliable event response analytics, updated in real-time, businesses can get fully prepared and ensure solvency through additional reinsurance cover, more accurately reserve funds, and confidently communicate risk to all stakeholders. The National Oceanic and Atmospheric Administration’s (NOAA) National Hurricane Center (NHC) has long been viewed as a valuable resource for forecasts on the expected track and severity of hurricanes. However, according to Callum Higgins, product manager, global climate, at RMS, “There are some limitations with what you get [from the NHC]. Forecasts lack detailed insights into the spatial variability of hazard severity and while uncertainty is accounted for, this is based on historical data rather than the forecast uncertainty specific to the storm. Hurricane Henri in 2021 was a good example of this. 
While the ultimate landfall location fell outside the NHC ‘cone of uncertainty’ four days in advance of landfall, given the large model uncertainty in the track for Henri, HWind forecasts were able to account for this possibility.”

Introducing HWind

RMS HWind provides observation-based tropical cyclone data for both real-time and historical events and was originally developed as a data service for the NHC by renowned meteorologist Dr. Mark Powell. It combines the widest set of observations for a particular storm in order to create the most accurate representation of its wind field.

Since RMS acquired HWind in 2015, it has continually evolved as a solution that can be leveraged more easily by insurers to benefit individual use cases. HWind provides snapshots (instantaneous views of the storm’s wind field) and cumulative footprints (past swaths of the maximum wind speeds) every six hours. In addition, RMS delivers hurricane forecast data that includes a series of forecast scenarios of both the wind and surge hazard, enabling users to understand the potential severity of the event up to five days in advance of landfall.

“Because HWind real-time products are released up to every six hours, you can adapt your response as forecasts shift. After an event has struck you very quickly get a good view of which areas have been impacted and to what level of severity,” explains Higgins.

The level of detail is another key differentiator. In contrast to the NHC forecasts, which do not include a detailed wind field, HWind provides much more data granularity, with forecast wind and surge scenarios developed by leveraging the RMS North Atlantic Hurricane Models. Snapshots and cumulative footprints, meanwhile, represent the wind field on a 1x1 kilometer grid. And while the NHC does provide uncertainty metrics in its forecasts, such as the “cone of uncertainty” around where the center of the storm will track, these are typically based on historical statistics.
“HWind accounts for the actual level of model convergence for a particular storm. That provides you with the insights you need to make decisions around how much confidence to place in each forecast, including whether a more conservative approach is required in cases of heightened uncertainty,” Higgins explains.

HWind’s observational approach and access to more than 30 data sources, some of which are exclusive to RMS, mean users are better able to capture a particular wind field and apply that data across a wide variety of use cases. Some HWind clients – most notably, Swiss Re – also use it as a trigger for parametric insurance policies.

“That’s a critical component for some of our clients,” says Higgins. “For a parametric trigger, you want to make sure you have as accurate as possible a view of the wind speed experienced at underwritten locations when a hurricane strikes.”

Real-time data is only one part of the picture. The HWind Enhanced Archive is a catalog of data – including high-resolution images, snapshots, and footprints from historical hurricanes extending back almost 30 years – that can be used to validate historical claims and loss experience.

“When we're creating forecasts in real-time, we only have the information of what has come before [in that particular storm],” says Higgins. “With the archive, we can take advantage of the data that comes in after we produce the snapshots and use all of that to produce an enhanced archive to improve what we do in real-time.”

Taking the Stress out of Event Response

“Event response is quite a stressful time for the insurance industry, because they've got to make business decisions based around what their losses could be,” Higgins adds.
“At the time of these live events, there's always increased scrutiny around their exposure and reporting.”

HWind has plugged the gap in the market for a tool that can provide earlier, more frequent, and more detailed insights into the potential impact of a hurricane before, during, and following landfall.

“The key reason for having HWind available with ExposureIQ is to have it all in one place,” explains Higgins. “There are many different sources of information out there, and during a live event the last thing you want to do is be scrambling across websites trying to see who's released what and then pull it across to your environment, so you can overlay it on your live portfolio of risks. As soon as we release the accumulation footprints, they are uploaded directly into the application, making it faster and more convenient for users to generate an understanding of potential loss for their specific portfolios.”

RMS applications such as ExposureIQ, and the modeling application Risk Modeler™, all use the same cloud-native Intelligent Risk Platform. This allows for a continuous workflow in which users can generate both accumulation analytics and modeled losses from the same set of exposure data.

During an event, for example, with the seven hurricane scenarios that form part of the HWind data flow, the detailed wind fields and tracks (see Figure 1) and the storm surge footprints for each scenario can be viewed on the ExposureIQ application for clients to run accumulations against. The application has a robust integrated mapping service that allows users to view their losses and hot spots on a map, and it also includes the functionality to switch to see the same data distributed in loss tables if that is preferred.

“Now that we have both those on view in the cloud, you can overlay the footprint files on top of your exposures, and quickly see it before you even run [the accumulations],” says Higgins.
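To make the idea of running an accumulation against a footprint concrete, the following is a minimal Python sketch under stated assumptions. It is not the ExposureIQ implementation or API: the grid-cell keys, the sample portfolio, the gust bands, and the function names `band_for` and `accumulate` are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical footprint: peak gust in mph keyed by a 1 km grid cell (x_km, y_km).
footprint = {(0, 0): 95.0, (0, 1): 82.0, (1, 0): 61.0}

# Hypothetical portfolio: (location id, grid cell, exposed limit in USD).
portfolio = [
    ("A", (0, 0), 1_000_000),
    ("B", (0, 1), 250_000),
    ("C", (1, 0), 500_000),
    ("D", (5, 5), 750_000),  # falls outside the footprint
]

# Illustrative gust bands, highest threshold first; these are not RMS thresholds.
BANDS = [(80.0, ">=80 mph"), (50.0, "50-80 mph")]


def band_for(gust):
    """Return the label of the first band whose threshold the gust meets."""
    for threshold, label in BANDS:
        if gust >= threshold:
            return label
    return "<50 mph"


def accumulate(footprint, portfolio):
    """Sum exposed limit per gust band by looking up each location's grid cell."""
    totals = defaultdict(int)
    for _loc_id, cell, limit in portfolio:
        gust = footprint.get(cell)
        label = "outside footprint" if gust is None else band_for(gust)
        totals[label] += limit
    return dict(totals)


totals = accumulate(footprint, portfolio)
for label, limit in totals.items():
    print(f"{label}: {limit:,} USD")
```

A real workflow would also apply policy and reinsurance terms and geocode locations properly; the sketch only shows the overlay-and-sum step that turns a hazard footprint into an exposed-limit view.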
Figure 1: RMS HWind forecast probability of peak gusts greater than 80 miles per hour from Hurricane Ida at 1200UTC August 29, 2021, overlaid on exposure data within the RMS ExposureIQ application

One-Stop-Shop

This close interaction between HWind and the ExposureIQ application indicates another advantage of the RMS product suite – the use of consistent event response data across the platform so exposure mapping and modeling are all in one place.

“The idea is that by having it on the cloud, it is much more performant; you can analyze online portfolios a lot more quickly, and you can get those reports to your board a lot faster than previously,” says Higgins.

In contrast to other solutions in the market, which typically use third-party hazard tools and modeling platforms, the RMS suite has a consistent model methodology flowing through the entire product chain.

“That's really where the sweet spot of ExposureIQ is – this is all one connected ecosystem,” commented Higgins. “I get my data into ExposureIQ and it is in the same format as Risk Modeler, so I don't need to convert anything. Both products use a consistent financial model too – so you are confident the underlying policy and reinsurance terms are being applied in the same way.”

The modular nature of the RMS application ecosystem means that, in addition to hurricane risks, data on perils such as floods, earthquakes, and wildfires are also available – and then processed by the relevant risk modeling tool to give clients insights on their potential losses.

“With that indication of where you might expect to experience claims, and how severe those claims might be, you can start to reach out to policyholders to understand if they've been affected.”

At this point, clients are then in a good position to start building their claims deployment strategy, preparing claims adjusters to visit impacted sites and briefing reserving and other teams on when to start processing payments.
But even before a hurricane has made landfall, clients can make use of forecast wind fields to identify locations that might be affected in advance of the storm and warn policyholders to prepare accordingly.

“That can not only help policyholders to protect their property but also mitigate insurance losses as well,” says Higgins. “Similarly, you can use it to apply an underwriting moratorium in advance of a storm. Identify areas that are likely to be impacted, and then feed that into underwriting processes to ensure that no one can write a policy in the region when a storm is approaching.”

The First Unified Risk Modeling Platform

Previously, before moving to an integrated, cloud-based platform, these tools would likely have been hosted using on-premises servers with all the significant infrastructure costs that implies. Now, in addition to accessing a full suite of products via a single cloud-native platform, RMS clients can also leverage the company’s three decades of modeling expertise, benefiting from a strong foundation of trusted exposure data to help manage their exposures.

“A key goal for a lot of people responding to events is initially to understand what locations are affected, how severely they're affected, and what their total exposed limit is, and to inform things like deploying claims adjusters,” says Higgins.

And beyond the exposure management function, argues Higgins, it’s about gearing up for the potential pain of those claims, the processes that go around that, and the communication to the board. “These catastrophic events can have a significant impact on a company’s revenue, and the full implications – and any potential mitigation – needs to be well understood.”

Find out more about the award-winning ExposureIQ.

This Changes Everything

At Exceedance 2020, RMS explored the key forces currently disrupting the industry, from technology, data analytics and the cloud through to rising extremes of catastrophic events like the pandemic and climate change. This coupling of technological and environmental disruption represents a true inflection point for the industry. EXPOSURE asked six experts across RMS for their views on why they believe these forces will change everything.

Cloud Computing: Moe Khosravy, Executive Vice President, Software and Platforms

How are you seeing businesses transition their workloads over to the cloud?

I have to say it’s been remarkable. We’re way past basic conversations on the value proposition of the cloud to now having deep technical discussions that are truly transformative plays. Customers are looking for solutions that seamlessly scale with their business and platforms that lower their cost of ownership while delivering capabilities that can be consumed from anywhere in the world.

Why is the cloud so important or relevant now?

It is now hard for a business to beat the benefits that the cloud offers and getting harder to justify buying and supporting complex in-house IT infrastructure. There is also a mindset shift going on – why is an in-house IT team responsible for running and supporting another vendor’s software on their systems if the vendor itself can provide that solution? This burden can now be lifted using the cloud, letting the business concentrate on what it does best.

Has the pandemic affected views of being in the cloud?

I would say absolutely. We have always emphasized the importance of cloud and true SaaS architectures to enable business continuity – allowing you to do your work from anywhere, decoupled from your IT and physical footprint. Never has the importance of this been more clearly underlined than during the past few months.
Risk Analytics: Cihan Biyikoglu, Executive Vice President, Product

What are the specific industry challenges that risk analytics is solving or has the potential to solve?

Risk analytics really is a wide field, but in the immediate short term one of the focus areas for us is improving productivity around data. So much time is spent by businesses trying to manually process data – cleansing, completing and correcting data – and on conversion between incompatible datasets. This alone is a huge barrier just to get a single set of results. If we can take this burden away, give decision-makers the power to get results in real time with automated and efficient data handling, then with that I believe we will liberate them to use the latest insights to drive business results.

Another important innovation here is the HD Models™. The power of the new engine with its improved accuracy I believe is a game changer that will give our customers a competitive edge.

How will risk analytics impact activities and capabilities within the market?

As seen in other industries, the more data you can combine, the better the analytics become – that’s the universal law of analytics. Getting all of this data on a unified platform and combining different datasets unearths new insights, which could produce opportunities to serve customers better and drive profit or growth.

What are the longer-term implications for risk analytics?

In my view, it’s about generating more effective risk insights from analytics, which results in better decision-making and the ability to explore new product areas with more confidence. It will spark a wave of innovation to profitably serve customers with exciting products and understand the risk and cost drivers more clearly.

How is RMS capitalizing on risk analytics?
At RMS, we have the pieces in place for clients to accelerate their risk analytics with the unified, open platform, Risk Intelligence™, which is built on a Risk Data Lake™ in the cloud and is ready to take all sources of data and unearth new insights. Applications such as Risk Modeler™ and ExposureIQ™ can quickly get decision-makers to the analytics they need to influence their business.

Open Standards: Dr. Paul Reed, Technical Program Manager, RDOS

Why are open standards so important and relevant now?

I think the challenges of risk data interoperability and supporting new lines of business have been recognized for many years, as companies have been forced to rework existing data standards to try to accommodate emerging risks and to squeeze more data into proprietary standards that can trace their origins to the 1990s. Today, however, with the availability of big data technology, cloud platforms such as RMS Risk Intelligence and standards such as the Risk Data Open Standard™ (RDOS) allow support for high-resolution risk modeling, new classes of risk, complex contract structures and simplified data exchange.

Are there specific industry challenges that open standards are solving or have the potential to solve?

I would say that open standards such as the RDOS are helping to solve risk data interoperability challenges, which have been hindering the industry, and provide support for new lines of business. In the case of the RDOS, it’s specifically designed for extensibility, to create a risk data exchange standard that is future-proof and can be readily modified and adapted to meet both current and future requirements. Open standards in other industries, such as Kubernetes, Hadoop and HTML, have proven to be catalysts for collaborative innovation, enabling accelerated development of new capabilities.

How is RMS responding to and capitalizing on this development?
RMS contributed the RDOS to the industry, and we are using it as the data framework for our platform called Risk Intelligence. The RDOS is free for anyone to use, and anyone can contribute updates that can expand the value and utility of the standard – so its development and direction are not dependent on a single vendor. We’ve put in place an independent steering committee to guide the development of the standard, currently made up of 15 companies. It provides benefits to RMS clients not only by enhancing the new RMS platform and applications, but also by enabling other industry users who create new and innovative products and address new and emerging risk classes.

Pandemic Risk: Dr. Gordon Woo, Catastrophist

How does pandemic risk affect the market?

There’s no doubt that the current pandemic represents a globally systemic risk across many market sectors, and insurers are working out both what the impact from claims will be and the impact on capital. For very good reasons, people are categorizing the COVID-19 disease as a game-changer. However, in my view, SARS [severe acute respiratory syndrome] in 2003, MERS [Middle East respiratory syndrome] in 2012 and Ebola in 2014 should also have been game-changers. Over the last decade alone, we have seen multiple near misses.

It’s likely that suppression strategies to combat the coronavirus will continue in some form until a vaccine is developed, and governments must strike this uneasy balance between their economies and the opening of their populations to exposure from the virus.

What are the longer-term implications of this current pandemic for the industry?

It’s clear that the mitigation of pandemic risk will need to be prioritized and given far more urgency than before. There’s no doubt in my mind that events such as the 2014 Ebola crisis were a missed opportunity for new initiatives in pandemic risk mitigation.
Away from the life and health sector, all insurers will need to have a better grasp on future pandemics, after seeing the impact of COVID-19 and its wide business impact. The market could look to bold initiatives with governments to examine how to cover future pandemics, similar to how terror attacks are covered as a pooled risk.

How is RMS helping its clients in relation to COVID-19?

Since early January when the first cases emerged from Wuhan, China, we’ve been supporting our clients and the wider market in gaining a better understanding of the diverse loss implications of COVID-19. Our LifeRisks® team has been actively assisting in pandemic risk management, with regular communications and briefings, and will incorporate new perspectives from COVID-19 into our infectious diseases modeling.

Climate Change: Ryan Ogaard, Senior Vice President, Model Product Management

Why is climate change so relevant to the market now?

There are many reasons. Insurers and their stakeholders are looking at the constant flow of catastrophes, from the U.S. hurricane season of 2017, wildfires in California and bushfires in Australia, to recent major typhoons and wondering if climate change is driving extreme weather risk, and what it could do in the future. They’re asking whether the current extent of climate change risk is priced into their premiums. Regulators are also beginning to conduct stress tests on the potential impact of climate change in the future, and insurers must respond.

How will climate change impact how the market operates?

Similar to any risk, insurers need to understand and quantify how the physical risk of climate change will impact their portfolios and adjust their strategy accordingly. Also, over the coming years it appears likely that regulators will incorporate climate change reporting into their regimes. Once an insurer understands their exposure to climate change risk, they can then start to take action – which will impact how the market operates.
These actions could be in the form of premium changes, mitigating actions such as supporting physical defenses, diversifying the risk or taking on more capital.

How is RMS responding to market needs around climate change?

RMS is listening to the needs of clients to understand their pain points around climate change risk, what actions they are taking and how we can add value. We’re working with a number of clients on bespoke studies that modify the current view of risk to project into the future and/or test the sensitivity of current modeling assumptions. We’re also working to help clients understand the extent to which climate change is already built into risk models, to educate clients on emerging climate change science and to explain whether there is or isn’t a clear climate change signal for a particular peril.

Cyber: Dr. Christos Mitas, Vice President, Model Development

How is this change currently manifesting itself?

While cyber risk itself is not new, anyone involved in protecting or insuring organizations against cyberattacks will know that the nature of cyber risk is forever evolving. This could involve changes in those perpetrating the attacks, from lone wolf criminals to state-backed actors, or in the type of target, from an unpatched personal computer to a power-plant control system. If you take the current COVID-19 pandemic, this has seen cybercriminals look to take advantage of millions of employees working from home or vulnerable business IT infrastructure. Change to the threat landscape is a constant for cyber risk.

Why is cyber risk so important and relevant right now?

Simply because new cyber risks emerge, and insurers who are active in this area need to ensure they are ahead of the curve in terms of awareness and have the tools and knowledge to manage new risks.
There have been systemic ransomware attacks over the last few years, and criminals continue to look for potential weaknesses in networked systems, third-party software, supply chains – all requiring constant vigilance. It’s this continual threat of a systemic attack that requires insurers to use effective tools based on cutting-edge science, to capture the latest threats and identify potential risk aggregation.

How is RMS responding to market needs around cyber risk?

With our latest RMS Cyber Solutions, which is version 4.0, we’ve worked closely with clients and the market to really understand the pain points within their businesses, with a wealth of new data assets and modeling approaches. One area is the ability to know the potential cyber risk of the type of business you are looking to insure. In version 4.0, we have a database of over 13 million businesses that can help enrich the information you have about your portfolio and prospective clients, which then leads to more prudent and effective risk modeling.

A Time to Change

Our industry is undergoing a period of significant disruption on multiple fronts. From the rapidly evolving exposure landscape and the extraordinary changes brought about by the pandemic to step-change advances in technology and seismic shifts in data analytics capabilities, the market is undergoing an unparalleled transition period. As Exceedance 2020 demonstrated, this is no longer a time for business as usual. This is what defines leaders and culls the rest. This changes everything.

Climate Change: The Cost of Inaction

With pressure from multiple directions for a change in the approach to climate risk, how the insurance industry responds is under scrutiny.

Severe threats to the climate account for all of the top long-term risks in this year’s World Economic Forum (WEF) “Global Risks Report.” For the first time in the survey’s 10-year outlook, the top five global risks in terms of likelihood are all environmental. From an industry perspective, each one of these risks has potentially significant consequences for insurance and reinsurance companies:

- Extreme weather events with major damage to property, infrastructure and loss of human life
- Failure of climate change mitigation and adaptation by governments and businesses
- Man-made environmental damage and disasters including massive oil spills and incidents of radioactive contamination
- Major biodiversity loss and ecosystem collapse (terrestrial or marine) with irreversible consequences for the environment, resulting in severely depleted resources for humans as well as industries
- Major natural disasters such as earthquakes, tsunamis, volcanic eruptions and geomagnetic storms

“There is mounting pressure on companies from investors, regulators, customers and employees to demonstrate their resilience to rising climate volatility,” says John Drzik, chairman of Marsh and McLennan Insights. “Scientific advances mean that climate risks can now be modeled with greater accuracy and incorporated into risk management and business plans.
High-profile events, like recent wildfires in Australia and California, are adding pressure on companies to take action on climate risk.”

In December 2019, the Bank of England introduced new measures for insurers, expecting them to assess, manage and report on the financial risks of climate change as part of the bank’s 2021 Biennial Exploratory Scenario (BES) exercise. The BES builds on the Prudential Regulation Authority’s Insurance Stress Test 2019, which asked insurers to stress test their assets and liabilities based on a series of future climate scenarios. The Network for the Greening of the Financial System shows how regulators in other countries are moving in a similar direction.

“The BES is a pioneering exercise, which builds on the considerable progress in addressing climate-related risks that has already been made by firms, central banks and regulators,” said outgoing Bank of England governor Mark Carney. “Climate change will affect the value of virtually every financial asset; the BES will help ensure the core of our financial system is resilient to those changes.”

The insurance industry’s approach to climate change is evolving. Industry-backed groups such as ClimateWise have been set up to respond to the challenges posed by climate change while also influencing policymakers. “Given the continual growth in exposure to natural catastrophes, insurance can no longer simply rely on a strategy of assessing and re-pricing risk,” says Maurice Tulloch, former chair of ClimateWise and CEO of international insurance at Aviva.
“Doing so threatens a rise of uninsurable markets.”

The Cost of Extreme Events

In the past, property catastrophe (re)insurers were able to recalibrate their perception of natural catastrophe risk on an annual basis, as policies came up for renewal, believing that changes to hazard frequency and/or severity would occur incrementally over time. However, it has become apparent that some natural hazards have a much greater climate footprint than had been previously imagined.

Attribution studies are helping insurers and other stakeholders to measure the financial impact of climate change on a specific event. “You have had events in the last few years that have a climate change signature to them,” says Robert Muir-Wood, chief research officer of science and technology at RMS. “That could include wildfire in California or extraordinary amounts of rainfall during Hurricane Harvey over Houston, or the intensity of hurricanes in the Caribbean, such as Irma, Maria and Dorian.

“These events appear to be more intense and severe than those that have occurred in the past,” he continues. “Attribution studies are corroborating the fact that these natural disasters really do have a climate change signature. It was a bit experimental to start with, but now it’s just become a regular part of the picture, that after every event a designated attribution study program will be undertaken … often by more than one climate lab.

“In the past it was a rather futile argument whether or not an event had a greater impact because of climate change, because you couldn’t really prove the point,” he adds. “Now it’s possible to say not only if an event has a climate change influence, but by how much. The issue isn’t whether something was or was not climate change, it’s that climate change has affected the probability of an event like that by this amount.
That is the nature of the conversation now, which is an intelligent way of thinking about it.”

Record catastrophe losses in 2017 and 2018 – with combined claims costing insurers US$230 billion, according to Swiss Re sigma – have had a significant impact on the competitive and financial position of many property catastrophe (re)insurers. The loss tally from 2019 was less severe, with global insurance losses below the 10-year average at US$56 billion, but Typhoons Faxai and Hagibis caused significant damage to Japan when they occurred just weeks apart in September and October.

“It can be argued that the insurance industry is the only sector that is going to be able to absorb the losses from climate change,” adds Muir-Wood. “Companies already feel they are picking up losses in this area and it’s a bit uncharted – you can’t just use the average of history. It doesn’t really work anymore. So, we need to provide the models that give our clients the comfort of knowing how to handle and price climate change risks in anticipation.”

The Cost of Short-Termism

While climate change is clearly on the agenda of the boards of international insurance and reinsurance firms, its emphasis differs from company to company, according to the Geneva Association. In a report, the industry think tank found that insurers are hindered from scaling up their contribution to climate adaptation and mitigation by barriers that are imposed at a public policy and regulatory level.

The need to take a long-term view on climate change is at odds with the pressures that insurance companies are under as public and regulated entities.
Shareholder expectations and the political demands to keep insurance rates affordable are in conflict with the need to charge a risk-adjusted price or reduce exposures in regions that are highly catastrophe exposed. This need to protect property owners from full risk pricing became an election issue in Florida when state-owned carrier Florida Citizens supported customers with effectively subsidized premiums. The disproportionate emphasis on using the historical record as a means of modeling the probability of future losses is a further challenge for the private market operating in the state. “In the past when insurers were confronted with climate change, they were comfortable with the sense that they could always put up the price or avoid writing the business if the risk got too high,” says Muir-Wood. “But I don’t think that’s a credible position anymore. We see situations, such as in California, where insurers are told they should already have priced in climate change risk and they need to use the average of the last 30 years, and that’s obviously a challenge for the solvency of insurers. “The Florida Insurance Commissioner’s function is more weighted to look after the interests of consumers around insurance prices, and they maintain a very strong line that risk models should be calibrated against the long-term historical averages,” he continues. “And they’ve said that both in Florida for hurricane and in California for wildfire. And in a time of change and a time of increased risk, that position is clearly not in the interest of insurers, and they need to be thinking carefully about that. “Regulators want to be up to speed on this,” he adds. 
“If levels of risk are increasing, they need to make sure that (re)insurance companies can remain solvent. That they have enough capital to take on those risks. And supervisors will expect the companies they regulate to turn up with extremely good arguments and a demonstration of the data behind their position as to how they are pricing their risk and managing their portfolios.” The Reputational Cost of Inaction Despite the persistence of near-term pressures, inaction and the absence of a long-term view on climate change are no longer viable options for the industry. In part, this is due to a mounting reputational cost. European and Australian (re)insurers have, for instance, been more proactive in divesting from fossil fuels than their American and Asian counterparts. This is expected to change as negative attention mounts in both mainstream and social media. The number of insurers withdrawing cover for coal more than doubled in 2019, with coal exit policies announced by 17 (re)insurance companies. “The role of insurers is to manage society’s risks — it is their duty and in their own interest to help avoid climate breakdown,” says Peter Bosshard, coordinator of the Unfriend Coal campaign. “The industry’s retreat from coal is gathering pace as public pressure on the fossil fuel industry and its supporters grows.” The influence of climate change activists such as Greta Thunberg, the actions of NGO pressure groups like Unfriend Coal and growing climate change disclosure requirements are building critical momentum and scrutiny of the action (or lack thereof) taken by insurance senior management. “If you are in the driver’s seat of an insurance company and you know your customers’ attitudes are shifting quite fast, then you need to avoid looking as though you are behind the curve,” says Muir-Wood. “Quite clearly there is a reputational side to this. 
Attitudes are changing, and as an industry we should anticipate that all sorts of things that are tolerated today will become unacceptable in the future.” To understand your organization’s potential exposure to climate change, contact the RMS team.

Breaking Down the Pandemic

As COVID-19 has spread across the world and billions of people are on lockdown, EXPOSURE looks at how the latest scientific data can help insurers better model pandemic risk The coronavirus disease 2019 (COVID-19) was declared a pandemic by the World Health Organization (WHO) on March 11, 2020. In a matter of months, it has expanded from the first reported cases in the city of Wuhan in Hubei province, China, to confirmed cases in over 200 countries around the globe. At the time of writing, approximately one-third of the world’s population is in some form of lockdown, with movement and activities restricted in an effort to slow the disease’s spread. The transmissibility of COVID-19 is truly global, with even the extreme remoteness of location proving no barrier to its relentless progression as it reaches far-flung locations such as Papua New Guinea and Timor-Leste. After declaring the event a global pandemic, Dr. Tedros Adhanom Ghebreyesus, WHO director general, said: “We have never before seen a pandemic sparked by a coronavirus. This is the first pandemic caused by a coronavirus. And we have never before seen a pandemic that can be controlled. … This is not just a public health crisis, it is a crisis that will touch every sector — so every sector and every individual must be involved in the fight.” Ignoring the Near Misses COVID-19 has been described as the biggest global catastrophe since World War II. Its impact on every part of our lives, from the mundane to the complex, will be profound, and its ramifications will be far-reaching and enduring. On multiple levels, the coronavirus has caught the world off guard. So rapidly has it spread that initial response strategies, designed to slow its progress, were quickly reevaluated and more restrictive measures have been required to stem the tide. Yet, some are asking why many nations have been so flat-footed in their response. 
To find a comparable pandemic event, it is necessary to look back over 100 years to the 1918 flu pandemic, also referred to as Spanish flu. While this is a considerable time gap, the interim period has witnessed multiple near misses that should have ensured countries remained primed for a potential pandemic. However, as Dr. Gordon Woo, catastrophist at RMS, explains, such events have gone largely ignored. “For very good reasons, people are categorizing COVID-19 as a game-changer. However, SARS in 2003 should have been a game-changer, MERS in 2012 should have been a game-changer, Ebola in 2014 should have been a game-changer. If you look back over the last decade alone, we have seen multiple near misses. “If you examine MERS, this had a mortality rate of approximately 30 percent — much greater than COVID-19 — yet fortunately it was not a highly transmissible virus. However, in South Korea a mutation saw its transmissibility rate surge to four chains of infection, which is why it had such a considerable impact on the country.” While COVID-19 is caused by a novel virus and there is no preexisting immunity within the population, its genetic makeup shares 80 percent of the coronavirus genes that sparked the 2003 SARS outbreak. 
In fact, the virus is officially titled “severe acute respiratory syndrome coronavirus 2,” or “SARS-CoV-2.” However, the WHO refers to it by the name of the disease it causes, COVID-19, as calling it SARS could have “unintended consequences in terms of creating unnecessary fear for some populations, especially in Asia which was worst affected by the SARS outbreak in 2003.” “Unfortunately, people do not respond to near misses,” Woo adds, “they only respond to events. And perhaps that is why we are where we are with this pandemic. The current event is well within the bounds of catastrophe modeling, or potentially a lot worse if the fatality ratio was in line with that of the SARS outbreak. “When it comes to infectious diseases, we must learn from history. So, if we take SARS, rather than describing it as a unique event, we need to consider all the possible variants that could occur to ensure we are better able to forecast the type of event we are experiencing now.” Within Model Parameters A COVID-19-type event scenario is well within risk model parameters. The RMS® Infectious Diseases Model within its LifeRisks® platform incorporates a range of possible source infections, which includes coronavirus, and the company has been applying model analytics to forecast the potential development tracks of the current outbreak. Launched in 2007, the Infectious Diseases Model was developed in response to the H5N1 virus. This pathogen exhibited a mortality rate of approximately 60 percent, triggering alarm bells across the life insurance sector and sparking demand for a means of modeling its potential portfolio impact. The model was designed to produce outputs specific to mortality and morbidity losses resulting from a major outbreak. 
The probabilistic model is built on two critical pillars. The first is modeling that accurately reflects both the science of infectious disease and the fundamental principles of epidemiology. The second is a software platform that allows firms to address questions based on their exposure and experience data. “It uses pathogen characteristics that include transmissibility and virulence to compartmentalize a pathological epidemiological model and estimate an abated mortality and morbidity rate for the outbreak,” explains Dr. Brice Jabo, medical epidemiologist at RMS. “The next stage is to apply factors including demographics, vaccines and pharmaceutical and non-pharmaceutical interventions to the estimated rate. And finally, we adjust the results to reflect the specific differences in the overall health of the portfolio or the country to generate an accurate estimate of the potential morbidity and mortality losses.” The model currently spans 59 countries, allowing for differences in government strategy, health care systems, vaccine treatment, demographics and population health to be applied to each territory when estimating pandemic morbidity and mortality losses. Breaking Down the Virus In the case of COVID-19, transmissibility — the average number of infections that result from an initial case — has been a critical model parameter. The virus has a relatively high level of transmissibility, with data showing that the average infection rate is in the region of 1.5-3.5 per initial infection. However, while there is general consensus on this figure, establishing an estimate for the virus severity or virulence is more challenging, as Jabo explains: “Understanding the virulence of the disease enables you to assess the potential burden placed on the health care system. 
In the model, we therefore track the proportion of mild, severe, critical and fatal cases to establish whether the system will be able to cope with the outbreak. However, the challenge factor is that this figure is very dependent on the number of tests that are carried out in the particular country, as well as the eligibility criteria applied to conducting the tests.” An effective way of generating more concrete numbers is to have a closed system, where everyone in a particular environment has a similar chance of contracting the disease and all individuals are tested. In the case of COVID-19 these closed systems have come in the form of cruise ships. In these contained environments, it has been possible to test all parties and track the infection and fatality rates accurately. Another parameter tracked in the model is non-pharmaceutical intervention — those measures introduced in the absence of a vaccine to slow the progression of the disease and prevent health care systems from being overwhelmed. Suppression strategies are currently the most effective form of defense in the case of COVID-19. They are likely to be in place in many countries for a number of months as work continues on a vaccine. “This is an example of a risk that is hugely dependent on government policy for how it develops,” says Woo. “In the case of China, we have seen how the stringent policies they introduced have worked to contain the first wave, as well as the actions taken in South Korea. There has been concerted effort across many parts of Southeast Asia, a region prone to infectious diseases, to carry out extensive testing, chase contacts and implement quarantine procedures, and these have so far proved successful in reducing the spread. 
The focus is now on other parts of the world such as Europe and the Americas as they implement measures to tackle the outbreak.” The Infectious Diseases Model’s vaccine and pharmaceutical modifiers reflect improvements in vaccine production capacity, manufacturing techniques and the potential impact of antibacterial resistance. While an effective treatment is, at time of writing, still in development, this does allow users to conduct “what-if” scenarios. “Model users can apply vaccine-related assumptions that they feel comfortable with,” Jabo says. “For example, they can predict potential losses based on a vaccine being available within two months that has an 80 percent effectiveness rate, or an antiviral treatment available in one month with a 60 percent rate.” Data Upgrades Various pathogens have different mortality and morbidity distributions. In the case of COVID-19, evidence to date suggests that the highest levels of mortality from the virus occur in the 60-plus age range, with fatality levels declining significantly below this point. However, recent advances in data relating to immunity levels have greatly increased our understanding of the specific age range exposed to a particular virus. “Recent scientific findings from data arising from two major flu viruses, H5N1 and A/H7N9, have had a significant impact on our understanding of vulnerability,” explains Woo. “The studies have revealed that the primary age range of vulnerability to a flu virus is dependent upon the first flu that you were exposed to as a child. “There are two major flu groups to which everyone would have had some level of exposure at some stage in their childhood. That exposure would depend on which flu virus was dominant at the time they were born, influencing their level of immunity and which type of virus they are more susceptible to in the future. 
This is critical information in understanding virus spread and we have adapted the age profile vulnerability component of our model to reflect this.” Recent model upgrades have also allowed for the application of detailed information on population health, as Jabo explains: “Preexisting conditions can increase the risk of infection and death, as COVID-19 is demonstrating. Our model includes a parameter that accounts for the underlying health of the population at the country, state or portfolio level. “The information to date shows that people with co-morbidities such as hypertension, diabetes and cardiovascular disease are at a higher risk of death from COVID-19. It is possible, based on this data, to apply the distribution of these co-morbidities to a particular geography or portfolio, adjusting the outputs based on where our data shows high levels of these conditions.” Predictive Analytics The RMS Infectious Diseases Model is designed to estimate pandemic loss for a 12-month period. However, to enable users to assess the potential impact of the current pandemic in real time, RMS has developed a hybrid version that combines the model pandemic scenarios with the number of cases reported. “Using the daily cases numbers issued by each country,” says Jabo, “we project forward from that data, while simultaneously projecting backward from the RMS scenarios. Using this hybrid approach, it allows us to provide a time-dependent estimate for COVID-19. In effect, we are creating a holistic alignment of observed data coupled with RMS data to provide our clients with a way to understand how the evolution of the pandemic is progressing in real time.” Aligning the observed data with the model parameters makes the selection of proper model scenarios more plausible. 
The forward and backward projections not only allow for short-term projections but also form part of model validation and enable users to derive predictive analytics to support their portfolio analysis. “Staying up to date with this dynamic event is vital,” Jabo concludes, “because the impact of the myriad government policies and measures in place will result in different potential scenarios, and that is exactly what we are seeing happening.”
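
The role that transmissibility and non-pharmaceutical interventions play in a compartmental model can be illustrated with a textbook SIR (susceptible, infected, recovered) calculation. This is a minimal sketch and not the RMS Infectious Diseases Model: the function name, the seven-day infectious period, the population size and the `contact_reduction` factor are all illustrative assumptions. Only the 1.5-3.5 transmissibility range comes from the article.

```python
def sir_attack_rate(r0, infectious_days=7.0, population=1_000_000,
                    initial_cases=10, contact_reduction=0.0, days=720):
    """Fraction of the population ever infected in a basic SIR run.

    r0                -- average infections caused by one case (article: 1.5-3.5)
    infectious_days   -- assumed mean infectious period (hypothetical value)
    contact_reduction -- assumed share of contacts removed by interventions
    """
    gamma = 1.0 / infectious_days                   # daily recovery rate
    beta = r0 * gamma * (1.0 - contact_reduction)   # daily transmission rate
    s = float(population - initial_cases)           # susceptible
    i = float(initial_cases)                        # currently infected
    for _ in range(days):                           # one Euler step per day
        new_infections = min(beta * s * i / population, s)
        new_recoveries = gamma * i
        s -= new_infections
        i += new_infections - new_recoveries
    return (population - s) / population


if __name__ == "__main__":
    # Sweep the transmissibility range quoted in the article,
    # with and without an assumed 50 percent cut in contacts.
    for r0 in (1.5, 2.5, 3.5):
        open_rate = sir_attack_rate(r0)
        suppressed = sir_attack_rate(r0, contact_reduction=0.5)
        print(f"R0={r0}: no intervention {open_rate:.1%}, "
              f"50% fewer contacts {suppressed:.1%}")
```

Even in this toy version, the share of the population infected depends strongly on where transmissibility sits within the quoted range and on how far interventions cut contacts, which is why policy assumptions drive such different scenarios.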

Insurance: The next 10 years

Mohsen Rahnama, Cihan Biyikoglu and Moe Khosravy of RMS look to 2029, consider the changes the (re)insurance industry will have undergone and explain why all roads lead to a platform Over the last 30 years, catastrophe models have become an integral part of the insurance industry for portfolio risk management. During this time, the RMS model suite has evolved and expanded from the initial IRAS model — which covered California earthquake — to a comprehensive and diverse set of models covering over 100 peril-country combinations all over the world. RMS Risk Intelligence™, an open and flexible platform, was recently launched, and it was built to enable better risk management and support profitable risk selection. Since the earliest versions of catastrophe models, significant advances have been made in both technology and computing power. These advances allow for a more comprehensive application of new science in risk modeling and make it possible for modelers to address key sources of model and loss uncertainty in a more systematic way. These and other significant changes over the last decade are shaping the future of insurance. By 2029, the industry will be fully digitized, presenting even more opportunity for disruption in an era of technological advances. In what is likely to remain a highly competitive environment, market participants will need to differentiate based on the power of computing speed and the ability to mine and extract value from data to inform quick, risk-based decisions. Laying the Foundations So how did we get here? Over the past few decades we have witnessed several major natural catastrophes including Hurricanes Andrew, Katrina and Sandy; the Northridge, Kobe, Maule, Tōhoku and Christchurch Earthquakes; and costly hurricanes and California wildfires in 2017 and 2018. Further, human-made catastrophes have included the terrorist attacks of 9/11 and major cyberattacks, such as WannaCry and NotPetya. 
Each of these events has changed the landscape of risk assessment, underwriting and portfolio management. Combining the lessons learned from past events, including billions of dollars of loss data, with new technology has enhanced the risk modeling methodology, resulting in more robust models and a more effective way to quantify risk across diverse regions and perils. The sophistication of catastrophe models has increased as technology has enabled a better understanding of root causes and behavior of events, and it has improved analysis of their impact. Technology has also equipped the industry with more sophisticated tools to harness larger datasets and run more computationally intensive analytics. These new models are designed to translate finer-grained data into deeper and more detailed insights. Consequently, we are creating better models while also ensuring model users can make better use of model results through more sophisticated tools and applications. A Collaborative Approach In the last decade, the pace at which technology has advanced is compelling. Emerging technology has caused the insurance industry to question if it is responding quickly and effectively to take advantage of new opportunities. In today’s digital world, many segments of the industry are leveraging the power and capacity enabled by Cloud-computing environments to conduct intensive data analysis using robust analytics. Such an approach empowers the industry by allowing information to be accessed quickly, whenever it is needed, to make effective, fully informed decisions. The development of a standardized, open platform creates smooth workflows and allows for rapid advancement, information sharing and collaboration in growing common applications. 
The future of communication between various parties across the insurance value chain — insurers, brokers, reinsurers, supervisors and capital markets — will be vastly different from what it is today. By 2029, we anticipate the transfer of data, use of analytics and other collaborations will be taking place across a common platform. The benefits will include increased efficiency, more accurate data collection and improvements in underwriting workflow. A collaborative platform will also enable more robust and informed risk assessments, portfolio rollout processes and risk transfers. Further, as data is exchanged it will be enriched and augmented using new machine learning and AI techniques. An Elastic Platform We continue to see technology evolve at a very rapid pace. Infrastructure continues to improve as the cost of storage declines and computational speed increases. Across the board, the incremental cost of computing technology has come down. Software tools have evolved accordingly, with modern big data systems now capable of handling hundreds if not thousands of terabytes of data. Improved programming frameworks allow for more seamless parallel programming. User-interface components reveal data in ways that were not possible in the past. Furthermore, this collection of phenomenal advances is now available in the Cloud, with the added benefit that it is continuously self-improving to support growing commercial demands. In addition to helping avoid built-in obsolescence, the Cloud offers “elasticity.” Elasticity means accessing many machines when you need them and fewer when you don’t. It means storage that can dynamically grow and shrink, and computing capacity that can follow the ebb and flow of demand. In our world of insurance and data analytics, the macro cycles of renewal seasons and micromodeling demand bursts can both be accommodated through the elastic nature of the Cloud. 
In an elastic world, the actual cost of supercomputing goes down, and we can confidently guarantee fast response times. Empowering Underwriters A decade from now, the industry will look very different, not least due to changes within the workforce and the risk landscape. First-movers and fast-followers will be in a position of competitive advantage come 2029 in an industry where large incumbents are already partnering with more agile “insurtech” startups. The role of the intermediary will continue to evolve, and at every stage of risk transfer — from insured to primary insurer, reinsurer and into the capital markets — data sharing and standardization will become key success factors. Over the next 10 years, as data becomes more standardized and more widely shared, the concept of blockchain, or distributed ledger technology, will move closer to becoming a reality. This standardization, collaboration and use of advanced analytics are essential to the future of the industry. Machine learning and AI, highly sophisticated models and enhanced computational power will enable underwriters to improve their risk selection and make quick, highly informed decisions. And this ability will enhance the role of the insurance industry in society, in a changing and altogether riskier world. The tremendous protection gap can only be tackled when there is more detailed insight and differentiation around each individual risk. When there is greater insight into the underlying risk, there is less need for conservatism, risks become more accurately and competitively priced, and (re)insurers are able to innovate to provide products and solutions for new and emerging exposures. Over the coming decade, models will require advanced computing technology to fully harness the power of big data. Underwater robots are now probing previously unmapped ocean waters to detect changes in temperatures, currents, sea level and coastal flooding. Drones are surveying our built-up environment in fine detail. 
Artificial intelligence and machine learning algorithms are searching for patterns of climate change in these new datasets, and climate models are reconstructing the past and predicting the future at a resolution never before possible. These emerging technologies and datasets will help meet our industry’s insatiable demand for more robust risk assessment at the level of an individual asset. This explosion of data will fundamentally change the way we think about model execution and development, as well as the end-to-end software infrastructure. Platforms will need to be dynamic and forward-looking versus static and historic in the way they acquire, train, and execute on data. The industry has already transformed considerably over the past five years, despite traditionally being considered a laggard in terms of its technology adoption. The foundation is firmly in place for a further shift over the next decade where all roads are leading to a common, collaborative industry platform, where participants are willing to share data and insights and, as they do so, open up new markets and opportunities. RMS Risk Intelligence The analytical and computational power of the Risk Intelligence (RI) platform enables the RMS model development team to bring the latest science and research to the RMS catastrophe peril model suite and build the next generation of high-definition models. The functionality and high performance of RI allows the RMS team to assess elements of model and loss uncertainty in a more robust way than before. The framework of RI is flexible, modular and scalable, allowing the rapid integration of future knowledge with a swifter implementation and update cycle. The open modeling platform allows model users to extract more value from their claims experience to develop vulnerability functions that represent a view of risk specific to their data or to use custom-built alternatives. 
This enables users to perform a wide range of sensitivity tests and take ownership of their view of risk. Mohsen Rahnama is chief risk modeling officer and executive vice president, models and data, Cihan Biyikoglu is executive vice president, product and Moe Khosravy is executive vice president, software and platform at RMS

Like Moths to the Flame

Why is it that, in many different situations and perils, people appear to want to relocate toward the risk? What is the role of the private insurance and reinsurance industry in curbing their clients’ risk tropism? If the Great Miami Hurricane of 1926 were to occur again today it would result in insurance losses approaching US$200 billion. Even adjusted for inflation, that is hundreds of times more than the US$100 million damage toll in 1926. Over the past 100 years, the Florida coast has developed exponentially, with wealthy individuals drawn to buying lavish coastal properties — and the accompanying wind and storm-surge risks. Since 2000, the number of people living in coastal areas of Florida increased by 4.2 million, or 27 percent, to 19.8 million in 2015, according to the U.S. Census Bureau. This is an example of unintended “risk tropism,” explains Robert Muir-Wood, chief research officer at RMS. Just as the sunflower is a ‘heliotrope’, turning toward the sun, research has shown how humans have an innate drive to live near water, on a river or at the beach, often at increased risk of flood hazards. “There is a very strong human desire to find the perfect primal location for your house. It is something that is built deeply into the human psyche,” Muir-Wood explains. “People want to live with the sound of the sea, or in the forest ‘close to nature,’ and they are drawn to these locations thinking about all the positives and amenity values, but not really understanding or evaluating the accompanying risk factors. “People will pay a lot to live right next to the ocean,” he adds. 
“It’s an incredibly powerful force and they will invest in doing that, so the price of land goes up by a factor of two or three times when you get close to the beach.” Even when beachfront properties are wiped out in hurricane catastrophes, far from driving individuals away from a high-risk zone, research shows they simply “build back bigger,” says Muir-Wood. “The disaster can provide the opportunity to start again, and wealthier people move in and take the opportunity to rebuild grander houses. At least the new houses are more likely to be built to code, so maybe the reduction in vulnerability partly offsets the increased exposure at risk.” Risk tropism can also be found with the encroachment of high-value properties into the wildlands of California, leading to a big increase in wildfire insurance losses. Living close to trees can be good for mental health until those same trees bring a conflagration. Insurance losses due to wildfire exceeded US$10 billion in 2017 and have already breached US$12 billion for last year’s Camp, Hill and Woolsey Fires, according to the California Department of Insurance. It is not the number of fires that has increased, but the number of houses consumed by the fires. Muir-Wood notes that the footprint of the 2017 Tubbs Fire, with claims reaching nearly US$10 billion, was very similar to the area burned during the Hanley Fire of 1964. The principal difference in outcome is driven by how much housing has been developed in the path of the fire. “If a fire like that arrives twice in one hundred years to destroy your house, then the amount you are going to have to pay in insurance premium is going to be more than 2 percent of the value per year,” he says. 
“People will think that’s unjustified and will resist it, but actually insurance tends to stop working when you have levels of risk cost above 1 percent of the property value, meaning, quite simply, that people are unprepared to pay for it.” Risk tropism can also be found in the business sector, in the way that technology companies have clustered in Silicon Valley: a tectonic rift within a fast-moving tectonic plate boundary. The tectonics have created the San Francisco Bay and modulate the climate to bring natural air-conditioning. “Why is it that, around the world, the technology sector has picked locations — including Silicon Valley, Seattle, Japan and Taiwan — that are on plate boundaries and are earthquake prone?” asks Muir-Wood. “There seems to be some ideal mix of mountains and water. The Bay Area is a very attractive environment, which has brought the best students to the universities and has helped companies attract some of the smartest people to come and live and work in Silicon Valley,” he continues. “But one day there will be a magnitude 7+ earthquake in the Bay Area that will bring incredible disruption, that will affect the technology firms themselves.” Insurance and reinsurance companies have an important role to play in informing and dissuading organizations and high net worth individuals from being drawn toward highly exposed locations; they can help by pricing the risk correctly and maintaining underwriting discipline. The difficulty comes when politics and insurance collide. The growth of Fair Access to Insurance Requirements (FAIR) plans and beach plans, offering more affordable insurance in parts of the U.S. that are highly exposed to wind and quake perils, is one example of how this function is undermined. At its peak, the size of the residual market in hurricane-exposed states was US$885 billion, according to the Insurance Information Institute (III). 
It has steadily been reduced, partly as a result of the influx of non-traditional capacity from the ILS market and competitive pricing in the general reinsurance market. However, in many cases the markets-of-last-resort remain some of the largest property insurers in coastal states. Between 2005 and 2009 (following Hurricanes Charley, Frances, Ivan and Jeanne in 2004), the plans in Mississippi, Texas and Florida showed rapid percentage growth in terms of exposure and number of policyholders. A factor fueling this growth, according to the III, was the rise in coastal properties.

As long as state-backed insurers are willing to subsidize the cost of cover for those choosing to locate in the riskiest locations, private (re)insurance will fail as an effective check on risk tropism, thinks Muir-Wood. “In California there are quite a few properties that have not been able to get standard fire insurance,” he observes. “But there are state or government-backed schemes available, and they are being used by people whose wildfire risk is considered to be too high.”
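
Muir-Wood's premium arithmetic for a fire that returns twice in a century can be made explicit. The sketch below is only an illustration, not an RMS method: the function name, the assumption that each fire is a total loss (a damage ratio of 1.0), and the omission of expense and capital loadings are all assumptions introduced here.

```python
# Illustrative only: the pure annual loss cost as a fraction of property value.
# The 1 percent limit is the level Muir-Wood cites in the article; the
# function name and the default damage ratio are hypothetical.

AFFORDABILITY_LIMIT = 0.01  # risk cost above which "insurance tends to stop working"


def annual_loss_cost(events: int, years: int, damage_ratio: float = 1.0) -> float:
    """Expected annual loss as a fraction of property value.

    events / years is the annual frequency; damage_ratio is the share of the
    property value lost per event (1.0 means the house is destroyed).
    """
    return events / years * damage_ratio


# A fire that destroys the house twice in one hundred years.
tubbs_like = annual_loss_cost(events=2, years=100)
exceeds_limit = tubbs_like > AFFORDABILITY_LIMIT

print(f"Pure loss cost: {tubbs_like:.1%} of property value per year")
print(f"Above the 1 percent limit: {exceeds_limit}")
```

A real premium adds loadings on top of this pure loss cost, which is why the rate in the quote is described as "more than 2 percent" rather than exactly 2 percent.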

Earthquake Risk: New Zealand Insurance Sector Experiences Growing Pains

Change in homeowners insurance is gathering pace as insurers move to differential pricing models.

New Zealand’s insurance sector is undergoing fundamental change as the impact of the NZ$40 billion (US$27 billion) Canterbury Earthquake and the more recent Kaikōura disaster spur efforts to create a more sustainable, risk-reflective marketplace.

In 2018, EXPOSURE examined risk-based pricing in the region following Tower Insurance’s decision to adopt such an approach to achieve a “fairer and more equitable way of pricing risk.” Since then, IAG, the country’s largest general insurer, has followed suit, with properties in higher-risk areas forecast to see premium hikes, while it also adopts “a conservative approach” to providing insurance in peril-prone areas.

“Insurance, unsurprisingly, is now a mainstream topic across virtually every media channel in New Zealand,” says Michael Drayton, a consultant at RMS. “There has been a huge shift in how homeowners insurance is viewed, and it will take time to adjust to the introduction of risk-based pricing.”

Another market-changing development is the move by the country’s Earthquake Commission (EQC) to increase the first layer of buildings insurance cover it provides from NZ$100,000 to NZ$150,000 (US$68,000 to US$101,000), while lowering contents cover from NZ$20,000 (US$13,500) to zero. These changes come into force in July 2019.

Modeling the average annual loss (AAL) impact of these changes, based on the updated RMS New Zealand Earthquake Industry Exposure Database, shows the private sector will see a marginal increase in the amount of risk it takes on, as the AAL increase from the contents exit outweighs the decrease from the buildings cover hike. These findings have contributed greatly to the debate around the relationship between buildings and contents cover.

One major issue the market has been addressing is its ability to accurately estimate sums insured.
According to Drayton, recent events have seen three separate spikes around exposure estimates. “The first spike occurred in the aftermath of the Christchurch Earthquake,” he explains, “when there was much debate about commercial building values and limits, and confusion relating to sums insured and replacement values.

“The second occurred with the move away from open-ended replacement policies in favor of sums insured for residential properties.

“Now that the EQC has removed contents cover, we are seeing another spike as the private market broaches uncertainty around content-related replacement values.

“There is very much an education process taking place across New Zealand’s insurance industry,” Drayton concludes. “There are multiple lessons being learned in a very short period of time. Evolution at this pace inevitably results in growing pains, but if it is to achieve a sustainable insurance market it must push on through.”
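
The effect of the EQC layer changes on the private market can be illustrated with a simple per-claim split. This is a rough sketch under stated assumptions: the caps are the figures quoted above, but the function name, the two claim amounts, and the omission of excesses and other policy terms are hypothetical and are not drawn from the RMS modeling.

```python
# Illustrative only: how much of one earthquake claim falls to the private
# insurer above the EQC first layer. Caps are the NZ$ figures quoted in the
# article; the claim amounts are made up and excesses are ignored.

OLD_CAPS = {"building_cap": 100_000, "contents_cap": 20_000}
NEW_CAPS = {"building_cap": 150_000, "contents_cap": 0}


def private_share(building_loss, contents_loss, building_cap, contents_cap):
    """Loss left for the private insurer once the EQC caps are exhausted."""
    building_excess = max(0.0, building_loss - building_cap)
    contents_excess = max(0.0, contents_loss - contents_cap)
    return building_excess + contents_excess


# Hypothetical claims as (building loss, contents loss) in NZ$.
moderate = (60_000, 15_000)
severe = (400_000, 40_000)

for label, (building, contents) in (("moderate", moderate), ("severe", severe)):
    old = private_share(building, contents, **OLD_CAPS)
    new = private_share(building, contents, **NEW_CAPS)
    print(f"{label}: private share NZ${old:,.0f} before, NZ${new:,.0f} after")
```

In this sketch the private insurer picks up more of the moderate claim, because contents are no longer covered by the EQC, and less of the severe claim, because the higher buildings cap absorbs more of the loss. The net effect across a portfolio depends on the mix of claim sizes, which is what the AAL modeling described in the article estimates.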