Pete Dailey of RMS explains why model transparency is critical to client confidence

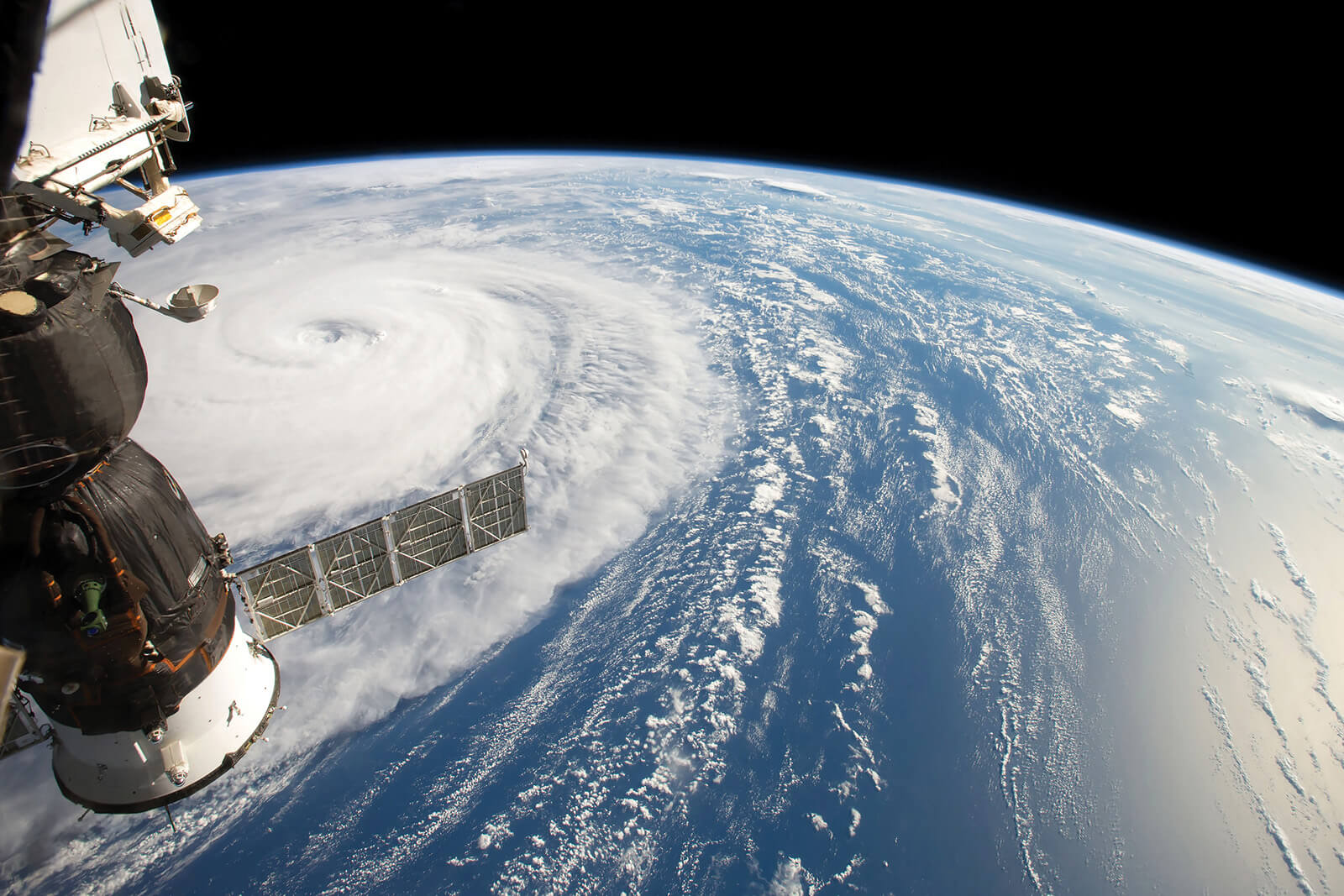

View of Hurricane Harvey from space

In the aftermath of Hurricanes Harvey, Irma and Maria (HIM), there was much comment on the disparity among the loss estimates produced by model vendors. Concerns have been raised about significant outlier results released by some modelers.

“It’s no surprise,” explains Dr. Pete Dailey, vice president at RMS, “that vendors who approach the modeling differently will generate different estimates. But rather than pushing back against this, we feel it’s critical to acknowledge and understand these differences.

“At RMS, we develop probabilistic models that operate across the full model space and deliver that insight to our clients. Uncertainty is inherent within the modeling process for any natural hazard, so we can’t rely solely on past events, but rather simulate the full range of plausible future events.”

Multiple components contribute to differences in loss estimates, including the scientific approaches and technologies used and the granularity of the exposure data.

“Increased demand for more immediate data is encouraging modelers to push the envelope”

“As modelers, we must be fully transparent in our loss-estimation approach,” he states. “All apply scientific and engineering knowledge to detailed exposure data sets to generate the best possible estimates given the skill of the model. Yet the models always provide a range of opinion when events happen, and sometimes that is wider than expected. Clients must know exactly what steps we take, what data we rely upon, and how we apply the models to produce our estimates as events unfold. Only then can stakeholders conduct the due diligence to effectively understand the reasons for the differences and make important financial decisions accordingly.”

Outlier estimates must also be scrutinized in greater detail. “There were some outlier results during HIM, and particularly for Hurricane Maria. The onus is on the individual modeler to acknowledge the disparity and be fully transparent about the factors that contributed to it. And most importantly, how such disparity is being addressed going forward,” says Dailey.

“A ‘big miss’ in a modeled loss estimate generates market disruption, and without clear explanation this impacts the credibility of all catastrophe models. RMS models performed quite well for Maria. One reason for this was our detailed local knowledge of the building stock and engineering practices in Puerto Rico. We’ve built strong relationships over the years and made multiple visits to the island, and the payoff for us and our client comes when events like Maria happen.”

As client demand for real-time and pre-event estimates grows, the data challenge placed on modelers is increasing.

“Demand for more immediate data is encouraging modelers like RMS to push the scientific envelope,” explains Dailey, “as it should. However, we need to ensure all modelers acknowledge, and to the degree possible quantify, the difficulties inherent in real-time loss estimation — especially since it’s often not possible to get eyes on the ground for days or weeks after a major catastrophe.”

Much has been said about the need for modelers to revise initial estimates months after an event occurs. Dailey acknowledges that RMS sometimes updates its estimates, but says that during HIM the strength of its early estimates was clear.

“In the months following HIM, we didn’t need to significantly revise our initial loss figures even though they were produced when uncertainty levels were at their peak as the storms unfolded in real time,” he states. “The estimates for all three storms were sufficiently robust in the immediate aftermath to stand the test of time. While no one knows what the next event will bring, we’re confident our models and, more importantly, our transparent approach to explaining our estimates will continue to build client confidence.”