Tag: CATASTROPHE

Living in a World of Constant Catastrophes

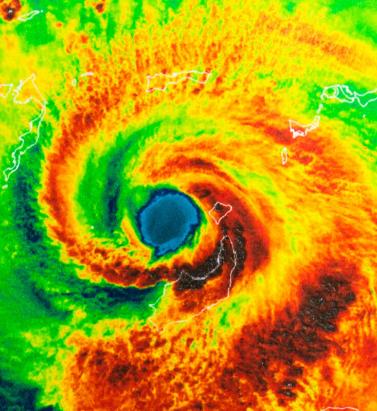

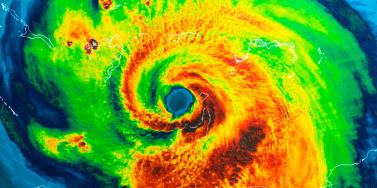

May 20, 2019

(Re)insurance companies are waking up to the reality that we are in a riskier world and the prospect of ‘constant catastrophes’ has arrived, with climate change a significant driver.

In his hotly anticipated annual letter to shareholders in February 2019, Warren Buffett, the CEO of Berkshire Hathaway and acclaimed “Oracle of Omaha,” warned about the prospect of “The Big One” — a major hurricane, earthquake or cyberattack that he predicted would “dwarf Hurricanes Katrina and Michael.” He warned that “when such a mega-catastrophe strikes, we will get our share of the losses and they will be big — very big.”

The question insurance and reinsurance companies need to ask themselves is whether they are prepared for the potential of an intense U.S. landfalling hurricane, a Tōhoku-size earthquake event and a major cyber incident if these types of combined losses hit their portfolio each and every year, says Mohsen Rahnama, chief risk modeling officer at RMS. “We are living in a world of constant catastrophes,” he says. “The risk is changing, and carriers need to make an educated decision about managing the risk.

“So how are (re)insurers going to respond to that? The broader perspective should be on managing and diversifying the risk in order to balance your portfolio and survive major claims each year,” he continues. “Technology, data and models can help balance a complex global portfolio across all perils while also finding the areas of opportunity.”

A Barrage of Weather Extremes

How often, for instance, should insurers and reinsurers expect an extreme weather loss year like 2017 or 2018? The combined insurance losses from natural disasters in 2017 and 2018 according to Swiss Re sigma were US$219 billion, which is the highest-ever total over a two-year period.
Hurricanes Harvey, Irma and Maria delivered the costliest hurricane loss for one hurricane season in 2017. Contributing to the total annual insurance loss in 2018 was a combination of natural hazard extremes, including Hurricanes Michael and Florence, Typhoons Jebi, Trami and Mangkhut, as well as heatwaves, droughts, wildfires, floods and convective storms. While it is no surprise that weather extremes like hurricanes and floods occur every year, (re)insurers must remain diligent about how such risks are changing with respect to their unique portfolios.

Looking at the trend in U.S. insured losses from 1980–2018, the data clearly shows losses are increasing every year, with climate-related losses being the primary drivers of loss, especially in the last four decades (even allowing for the fact that the completeness of the loss data over the years has improved).

Measuring Climate Change

With many non-life insurers and reinsurers feeling bombarded by the aggregate losses hitting their portfolios each year, insurance and reinsurance companies have started looking more closely at the impact that climate change is having on their books of business, as the costs associated with weather-related disasters increase. The ability to quantify the impact of climate change risk has improved considerably, both at a macro level and through attribution research, which considers the impact of climate change on the likelihood of individual events. The application of this research will help (re)insurers reserve appropriately and gain more insight as they build diversified books of business.

Take Hurricane Harvey as an example. Two independent attribution studies agree that the anthropogenic warming of Earth’s atmosphere made a substantial difference to the storm’s record-breaking rainfall, which inundated Houston, Texas, in August 2017, leading to unprecedented flooding.
In a warmer climate, such storms may hold more water volume and move more slowly, both of which lead to heavier rainfall accumulations over land.

Attribution studies can also be used to predict the impact of climate change on the return period of such an event, explains Pete Dailey, vice president of model development at RMS. “You can look at a catastrophic event, like Hurricane Harvey, and estimate its likelihood of recurring from either a hazard or loss point of view. For example, we might estimate that an event like Harvey would recur on average say once every 250 years, but in today’s climate, given the influence of climate change on tropical precipitation and slower moving storms, its likelihood has increased to say a 1-in-100-year event,” he explains.

“This would mean the annual probability of a storm like Harvey recurring has increased more than twofold from 0.4 percent to 1 percent, which to an insurer can have a dramatic effect on their risk management strategy.”

Climate change studies can help carriers understand its impact on the frequency and severity of various perils and throw light on correlations between perils and/or regions, explains Dailey. “For a global (re)insurance company with a book of business spanning diverse perils and regions, they want to get a handle on the overall effect of climate change, but they must also pay close attention to the potential impact on correlated events.

“For instance, consider the well-known correlation between the hurricane season in the North Atlantic and North Pacific,” he continues. “Active Atlantic seasons are associated with quieter Pacific seasons and vice versa. So, as climate change affects an individual peril, is it also having an impact on activity levels for another peril?
Maybe in the same direction or in the opposite direction?”

Understanding these “teleconnections” is just as important to an insurer as the more direct relationship of climate to hurricane activity in general, thinks Dailey. “Even though it’s hard to attribute the impact of climate change to a particular location, if we look at the impact on a large book of business, that’s actually easier to do in a scientifically credible way,” he adds. “We can quantify that and put uncertainty around that quantification, thus allowing our clients to develop a robust and objective view of those factors as a part of a holistic risk management approach.”

Of course, the influence of climate change is easier to understand and measure for some perils than others. “For example, we can observe an incremental rise in sea level annually — it’s something that is happening right in front of our eyes,” says Dailey. “So, sea-level rise is very tangible in that we can observe the change year over year. And we can also quantify how the rise of sea levels is accelerating over time and then combine that with our hurricane model, measuring the impact of sea-level rise on the risk of coastal storm surge, for instance.”

Each peril has a unique risk signature with respect to climate change, explains Dailey. “When it comes to a peril like severe convective storms — tornadoes and hail storms for instance — they are so localized that it’s difficult to attribute climate change to the future likelihood of such an event. But for wildfire risk, there’s high correlation with climate change because the fuel for wildfires is dry vegetation, which in turn is highly influenced by the precipitation cycle.”

Satellite data from 1993 through to the present shows there is an upward trend in the rate of sea-level rise, for instance, with the current rate of change averaging about 3.2 millimeters per year.
Sea-level rise, combined with increasing exposures at risk near the coastline, means that storm surge losses are likely to increase as sea levels rise more quickly.

“In 2010, we estimated the amount of exposure within 1 meter above the sea level, which was US$1 trillion, including power plants, ports, airports and so forth,” says Rahnama. “Ten years later, the exact same exposure was US$2 trillion. This dramatic exposure change reflects the fact that every centimeter of sea-level rise is subjected to a US$2 billion loss due to coastal flooding and storm surge as a result of even small hurricanes.

“And it’s not only the climate that is changing,” he adds. “It’s the fact that so much building is taking place along the high-risk coastline. As a result of that, we have created a built-up environment that is actually exposed to much of the risk.”

Rahnama highlights that, because of an increase in the frequency and severity of events, it is essential to implement prevention measures by promoting mitigation credits to minimize the risk. “How can the market respond to the significant losses year after year? It is essential to think holistically to manage and transfer the risk to the insurance chain from primary to reinsurance, capital market, ILS, etc.,” he continues. “The art of risk management, lessons learned from past events and use of new technology, data and analytics will help to prepare for responding to unpredicted ‘black swan’ type of events and being able to survive and minimize the catastrophic losses.”

Strategically, risk carriers need to understand the influence of climate change whether they are global reinsurers or local primary insurers, particularly as they seek to grow their business and plan for the future. Mergers and acquisitions and/or organic growth into new regions and perils will require an understanding of the risks they are taking on and how these perils might evolve in the future.
There is potential for catastrophe models to be used on both sides of the balance sheet as the influence of climate change grows. Dailey points out that many insurance and reinsurance companies invest heavily in real estate assets. “You still need to account for the risk of climate change on the portfolio, whether you’re insuring properties or whether you actually own them, there’s no real difference.” In fact, asset managers are more inclined to a longer-term view of risk when real estate is part of a long-term investment strategy. Here, climate change is becoming a critical part of that strategy.

“What we have found is that often the team that handles asset management within a (re)insurance company is an entirely different team to the one that handles catastrophe modeling,” he continues. “But the same modeling tools that we develop at RMS can be applied to both of these problems of managing risk at the enterprise level.

“In some cases, a primary insurer may have a one-to-three-year plan, while a major reinsurer may have a five-to-10-year view because they’re looking at a longer risk horizon,” he adds. “Every time I go to speak to a client — whether it be about our U.S. Inland Flood HD Model or our North America Hurricane Models — the question of climate change inevitably comes up. So, it’s become apparent this is no longer an academic question, it’s actually playing into critical business decisions on a daily basis.”

Preparing for a Low-carbon Economy

Regulation also has an important role in pushing both (re)insurers and large corporates to map and report on the likely impact of climate change on their business, as well as explain what steps they have taken to become more resilient. In the U.K., the Prudential Regulation Authority (PRA) and Bank of England have set out their expectations regarding firms’ approaches to managing the financial risks from climate change.

Meanwhile, a survey carried out by the PRA found that 70 percent of U.K.
banks recognize the risk climate change poses to their business. Among their concerns are the immediate physical risks to their business models — such as the exposure to mortgages on properties at risk of flood and exposure to countries likely to be impacted by increasing weather extremes. Many have also started to assess how the transition to a low-carbon economy will impact their business models and, in many cases, their investment and growth strategy.

“Financial policymakers will not drive the transition to a low-carbon economy, but we will expect our regulated firms to anticipate and manage the risks associated with that transition,” said Bank of England Governor Mark Carney, in a statement.

The transition to a low-carbon economy is a reality that (re)insurance industry players will need to prepare for, with the impact already being felt in some markets. In Australia, for instance, there is pressure on financial institutions to withdraw their support from major coal projects. In the aftermath of the Townsville floods in February 2019 and widespread drought across Queensland, there have been renewed calls to boycott plans for Australia’s largest thermal coal mine.

To date, 10 of the world’s largest (re)insurers have stated they will not provide property or construction cover for the US$15.5 billion Carmichael mine and rail project. And in its “Mining Risk Review 2018,” broker Willis Towers Watson warned that finding insurance for coal “is likely to become increasingly challenging — especially if North American insurers begin to follow the European lead.”
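
Dailey’s return-period example earlier in this article (a 1-in-250-year event becoming a 1-in-100-year event) can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, and the multi-year calculation assumes each year is independent, which is a simplification that is not stated in the article.

```python
def annual_probability(return_period_years: float) -> float:
    """Annual probability of an event with the given average return period."""
    if return_period_years <= 0:
        raise ValueError("return period must be positive")
    return 1.0 / return_period_years


def prob_at_least_once(return_period_years: float, horizon_years: int) -> float:
    """Chance of at least one occurrence over a planning horizon.

    Assumption (not from the article): years are independent of each other.
    """
    p = annual_probability(return_period_years)
    return 1.0 - (1.0 - p) ** horizon_years


# Figures quoted in the article: 1-in-250 years versus 1-in-100 years.
baseline = annual_probability(250)  # 0.4 percent per year
today = annual_probability(100)     # 1 percent per year
ratio = today / baseline            # 2.5, i.e. "more than twofold"

if __name__ == "__main__":
    print(f"baseline: {baseline:.1%}, today: {today:.1%}, ratio: {ratio:.1f}x")
    # A 10-year horizon, matching the reinsurer planning view quoted above.
    for rp in (250, 100):
        print(f"1-in-{rp}: {prob_at_least_once(rp, 10):.1%} chance within 10 years")
```

Looking at a multi-year horizon rather than a single year shows why the shift matters more to a carrier with a five-to-10-year view than the annual figures alone suggest.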

The Flames Burn Higher

May 20, 2019

With California experiencing two of the most devastating seasons on record in consecutive years, EXPOSURE asks whether wildfire now needs to be considered a peak peril.

Some of the statistics for the 2018 U.S. wildfire season appear normal. The season was a below-average year for the number of fires reported — 58,083 incidents represented only 84 percent of the 10-year average. The number of acres burned — 8,767,492 acres — was marginally above average at 132 percent.

Two factors, however, made it exceptional. First, for the second consecutive year, the Great Basin experienced intense wildfire activity, with some 2.1 million acres burned — 233 percent of the 10-year average. And second, the fires destroyed 25,790 structures, with California accounting for over 23,600 of the structures destroyed, compared to a 10-year U.S. annual average of 2,701 residences, according to the National Interagency Fire Center.

As of January 28, 2019, reported insured losses for the November 2018 California wildfires, which included the Camp and Woolsey Fires, were at US$11.4 billion, according to the California Department of Insurance. Add to this the insured losses of US$11.79 billion reported in January 2018 for the October and December 2017 California events, and these two consecutive wildfire seasons constitute the most devastating on record for the wildfire-exposed state.

Reaching its Peak?

Such colossal losses in consecutive years have sent shockwaves through the (re)insurance industry and are forcing a reassessment of wildfire’s secondary status in the peril hierarchy.

According to Mark Bove, natural catastrophe solutions manager at Munich Reinsurance America, wildfire’s status needs to be elevated in highly exposed areas. “Wildfire should certainly be considered a peak peril in areas such as California and the Intermountain West,” he states, “but not for the nation as a whole.”

His views are echoed by Chris Folkman, senior director of product management at RMS.
“Wildfire can no longer be viewed purely as a secondary peril in these exposed territories,” he says. “Six of the top 10 fires for structural destruction have occurred in the last 10 years in the U.S., while seven of the top 10, and 10 of the top 20 most destructive wildfires in California history have occurred since 2015. The industry now needs to achieve a level of maturity with regard to wildfire that is on a par with that of hurricane or flood.”

However, he is wary about potential knee-jerk reactions to this hike in wildfire-related losses. “There is a strong parallel between the 2017-18 wildfire seasons and the 2004-05 hurricane seasons in terms of people’s gut instincts. 2004 saw four hurricanes make landfall in Florida, with K-R-W [Hurricanes Katrina, Rita and Wilma] causing massive devastation in 2005. At the time, some pockets of the industry wondered out loud if parts of Florida were uninsurable. Yet the next decade was relatively benign in terms of hurricane activity.

“The key is to adopt a balanced, long-term view,” thinks Folkman. “At RMS, we think that fire severity is here to stay, while the frequency of big events may remain volatile from year-to-year.”

A Fundamental Re-evaluation

The California losses are forcing (re)insurers to overhaul their approach to wildfire, both at the individual risk and portfolio management levels.

“The 2017 and 2018 California wildfires have forced one of the biggest re-evaluations of a natural peril since Hurricane Andrew in 1992,” believes Bove. “For both California wildfire and Hurricane Andrew, the industry didn’t fully comprehend the potential loss severities. Catastrophe models were relatively new and had not gained market-wide adoption, and many organizations were not systematically monitoring and limiting large accumulation exposure in high-risk areas.
As a result, the shocks to the industry were similar.”

For decades, approaches to underwriting have focused on the wildland-urban interface (WUI), which represents the area where exposure and vegetation meet. However, exposure levels in these areas are increasing sharply. Together with excessive amounts of burnable vegetation, extended wildfire seasons, and climate-change-driven increases in temperature and extreme weather conditions, this growth is causing a significant hike in exposure potential for the (re)insurance industry.

A recent report published in PNAS entitled “Rapid Growth of the U.S. Wildland-Urban Interface Raises Wildfire Risk” showed that between 1990 and 2010 the new WUI area increased by 72,973 square miles (189,000 square kilometers) — larger than Washington State. The report stated: “Even though the WUI occupies less than one-tenth of the land area of the conterminous United States, 43 percent of all new houses were built there, and 61 percent of all new WUI houses were built in areas that were already in the WUI in 1990 (and remain in the WUI in 2010).”

“The WUI has formed a central component of how wildfire risk has been underwritten,” explains Folkman, “but you cannot simply adopt a black-and-white approach to risk selection based on properties within or outside of the zone. As recent losses, and in particular the 2017 Northern California wildfires, have shown, regions outside of the WUI zone considered low risk can still experience devastating losses.”

For Bove, while focus on the WUI is appropriate, particularly given the Coffey Park disaster during the 2017 Tubbs Fire, there is not enough focus on the intermix areas. This is the area where properties are interspersed with vegetation.

“In some ways, the wildfire risk to intermix communities is worse than that at the interface,” he explains.
“In an intermix fire, you have both a wildfire and an urban conflagration impacting the town at the same time, while in interface locations the fire has largely transitioned to an urban fire.

“In an intermix community,” he continues, “the terrain is often more challenging and limits firefighter access to the fire as well as evacuation routes for local residents. Also, many intermix locations are far from large urban centers, limiting the amount of firefighting resources immediately available to start combatting the blaze, and this increases the potential for a fire in high-wind conditions to become a significant threat. Most likely we’ll see more scrutiny and investigation of risk in intermix towns across the nation after the Camp Fire’s decimation of Paradise, California.”

Rethinking Wildfire Analysis

According to Folkman, the need for greater market maturity around wildfire will require a rethink of how the industry currently analyzes the exposure and the tools it uses.

“Historically, the industry has relied primarily upon deterministic tools to quantify U.S. wildfire risk,” he says, “which relate overall frequency and severity of events to the presence of fuel and climate conditions, such as high winds, low moisture and high temperatures.”

While such tools can prove valuable for addressing “typical” wildland fire events, such as the 2017 Thomas Fire in Southern California, their flaws have been exposed by other recent losses.

[Image: Burning wildfire at sunset]

“Such tools insufficiently address major catastrophic events that occur beyond the WUI into areas of dense exposure,” explains Folkman, “such as the Tubbs Fire in Northern California in 2017.
Further, the unprecedented severity of recent wildfire events has exposed the weaknesses in maintaining a historically based deterministic approach.”

While the scale of the 2017-18 losses has focused (re)insurer attention on California, companies must also recognize the scope for potential catastrophic wildfire risk extends beyond the boundaries of the western U.S.

“While the frequency and severity of large, damaging fires is lower outside California,” says Bove, “there are many areas where the risk is far from negligible.” While acknowledging that the broader western U.S. is seeing increased risk due to WUI expansion, he adds: “Many may be surprised that similar wildfire risk exists across most of the southeastern U.S., as well as sections of the northeastern U.S., like in the Pine Barrens of southern New Jersey.”

As well as addressing the geographical gaps in wildfire analysis, Folkman believes the industry must also recognize the data gaps limiting their understanding.

“There are a number of areas that are understated in underwriting practices currently, such as the far-ranging impacts of ember accumulations and their potential to ignite urban conflagrations, as well as vulnerability of particular structures and mitigation measures such as defensible space and fire-resistant roof coverings.”

In generating its US$9 billion to US$13 billion loss estimate for the Camp and Woolsey Fires, RMS used its recently launched North America Wildfire High-Definition (HD) Models to simulate the ignition, fire spread, ember accumulations and smoke dispersion of the fires.

“In assessing the contribution of embers, for example,” Folkman states, “we modeled the accumulation of embers, their wind-driven travel and their contribution to burn hazard both within and beyond the fire perimeter. Average ember contributions to structure damage and destruction is approximately 15 percent, but in a wind-driven event such as the Tubbs Fire this figure is much higher.
This was a key factor in the urban conflagration in Coffey Park.”

The model also provides full contiguous U.S. coverage, and includes other model innovations such as ignition and footprint simulations for 50,000 years, flexible occurrence definitions, smoke and evacuation loss across and beyond the fire perimeter, and vulnerability and mitigation measures on which RMS collaborated with the Insurance Institute for Business & Home Safety.

Smoke damage, which leads to loss from evacuation orders and contents replacement, is often overlooked in risk assessments, despite composing a tangible portion of the loss, says Folkman. “These are very high-frequency, medium-sized losses and must be considered. The Woolsey Fire saw 260,000 people evacuated, incurring hotel, meal and transport-related expenses. Add to this smoke damage, which often results in high-value contents replacement, and you have a potential sea of medium-sized claims that can contribute significantly to the overall loss.”

A further data resolution challenge relates to property characteristics. While primary property attribute data is typically well captured, believes Bove, many secondary characteristics key to wildfire are either not captured or not consistently captured.

“This leaves the industry overly reliant on both average model weightings and risk scoring tools. For example, information about defensible spaces, roofing and siding materials, protecting vents and soffits from ember attacks, these are just a few of the additional fields that the industry will need to start capturing to better assess wildfire risk to a property.”

A Highly Complex Peril

Bove is, however, conscious of the simple fact that “wildfire behavior is extremely complex and non-linear.” He continues: “While visiting Paradise, I saw properties that did everything correct with regard to wildfire mitigation but still burned and risks that did everything wrong and survived.
However, mitigation efforts can improve the probability that a structure survives.”

“With more data on historical fires,” Folkman concludes, “more research into mitigation measures and increasing awareness of the risk, wildfire exposure can be addressed and managed. But it requires a team mentality, with all parties — (re)insurers, homeowners, communities, policymakers and land-use planners — all playing their part.”

Beware the Private Catastrophe

July 25, 2016

Having a poor handle on the exposure on their books can result in firms facing disproportionate losses relative to their peers following a catastrophic event, but is easily avoidable, says Shaheen Razzaq, senior director – product management, at RMS.

The explosions at Tianjin port, the floods in Thailand and, most recently, the Fort McMurray wildfires in Canada: what these major events have in common is the disproportionate impact of losses incurred by certain firms’ portfolios.

Take the Thai floods in 2011, an event which, at the time, was largely unmodeled. The floods that inundated several major industrial estates around Bangkok caused an accumulation of losses for some reinsurers, resulting in negative rating action, loss in share price and withdrawals from the market.

Last year’s Tianjin port explosions in China also resulted in substantial insurance losses, which had an outsized impact on some firms, with significant concentrations of risk at the port or within impacted supply chains. The insured property loss from Asia’s most expensive human-caused catastrophe and the marine industry’s biggest loss since Superstorm Sandy is thought to be as high as US$3.5 billion, with significant “cost creep” as a result of losses from business interruption and contingent business interruption, clean-up and contamination expenses.

Some of the highest costs from Tianjin were suffered by European firms, with some firms experiencing losses reaching US$275 million.
The event highlighted the significant accumulation risk to non-modeled, man-made events in large transportation hubs such as ports, where much of the insurable content (cargo) is mobile and changeable and requires a deeper understanding of the exposures. Speaking about the firm’s experience in an interview with Bloomberg in early 2016, Zurich Insurance Group chairman and acting CEO Tom de Swaan noted that, because an accumulation of risk had not been sufficiently detected, the firm was looking at ways to strengthen its exposure management to avoid such losses in the future.

There is a growing understanding that firms can avoid suffering disproportionate impacts from catastrophic events by taking a more analytical approach to mapping the aggregation risk within their portfolios. According to Validus chairman and CEO Ed Noonan, in statements following Tianjin last year, it is now “unacceptable” for the marine insurance industry not to seek to improve its modeling of risk in complex, ever-changing port environments.

[Image: Women carrying sandbags to protect ancient ruins in Ayutthaya, Thailand, during the seasonal monsoon flooding]

While events such as the Tianjin port explosions, Thai floods and more recent Fort McMurray wildfires may have occurred in so-called industry “cold spots,” the impact of such events can be evaluated using deterministic scenarios to stress test a firm’s book of business. This can either provide a view of risk where there is a gap in probabilistic model coverage or supplement the view of risk from probabilistic models.

Although much has been written about Nassim Taleb’s highly improbable “black swan” events, in a global and interconnected world firms increasingly must contend with the reality of “grey swan” and “white swan” events.
According to risk consultant Geary Sikich in his article, “Are We Seeing the Emergence of More White Swan Events?” the definition of a grey swan is “a highly probable event with three principal characteristics: It is predictable; it carries an impact that can easily cascade…and, after the fact, we shift the focus to errors in judgment or some other human form of causation.” A white swan is a “highly certain event” with “an impact that can easily be estimated” where, once again, after the fact there is a shift to focus on “errors in judgment.”

“Addressing unpredictability requires that we change how Enterprise Risk Management programs operate,” states Sikich. “Forecasts are often based on a ‘static’ moment; frozen in time, so to speak…. Assumptions, on the other hand, depend on situational analysis and the ongoing tweaking via assessment of new information. An assumption can be changed and adjusted as new information becomes available.”

It is clear Sikich’s observations on unpredictability are becoming the new normal in the industry. Firms are investing to fully entrench strong exposure management practices across their entire enterprise to protect against private catastrophes. They are also reaping other benefits from this type of investment: Sophisticated exposure management tools are not just designed to help firms better manage their risks and exposures, but also to identify new areas of opportunity. By gaining a deeper understanding of their global portfolio across all regions and perils, firms are able to make more informed strategic decisions when looking to grow their business.

In specific regions for certain perils, firms can use exposure-based analytics to contextualize their modeled loss results.
This allows them to run “what if” analyses across the range of possible deterministic losses so they can stress test their portfolio against historical benchmarks, look for sensitivities and properly set expectations.

Exposure Management Analytics

Best-in-class exposure management analytics is all about challenging assumptions and using disaster scenarios to test how your portfolio would respond if a major event were to occur in a non-modeled peril region. Such analytics can identify the pinch points – potential accumulations both within and across classes of business – that may exist while also offering valuable information on where to grow your business.

Whether it is through M&A or organic growth, having a better grasp of exposure across your portfolio enables strategic decision-making and can add value to a book of business. The ability to analyze exposure across the entire organization and understand how it is likely to impact accumulations and loss potential is a powerful tool for today’s C-suite.

Exposure management tools enable firms to understand the risk in their business today but also how changes can impact their portfolio – whether acquiring a book, moving into new territories or divesting a nonperforming book of business.
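
The deterministic scenario testing described in this article can be illustrated with a minimal sketch. Everything in it is hypothetical: the `Location` fields, the `scenario_accumulation` helper, the coordinates, the insured values and the flat damage ratio are invented for illustration and do not represent any RMS product or real portfolio. The idea is simply to sum the insured value that falls inside a circular event footprint, broken out by class of business, and apply an assumed damage ratio.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0


@dataclass(frozen=True)
class Location:
    """One insured risk in a (hypothetical) portfolio."""
    name: str
    lat: float
    lon: float
    insured_value: float  # e.g. in US$ millions
    line_of_business: str


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometers."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def scenario_accumulation(portfolio, center_lat, center_lon, radius_km, damage_ratio):
    """Return (exposed value, scenario loss, exposed value by line of business).

    Assumption: a single flat damage ratio applies to everything in the footprint.
    """
    by_line = {}
    for loc in portfolio:
        if haversine_km(center_lat, center_lon, loc.lat, loc.lon) <= radius_km:
            by_line[loc.line_of_business] = (
                by_line.get(loc.line_of_business, 0.0) + loc.insured_value
            )
    exposed = sum(by_line.values())
    return exposed, exposed * damage_ratio, by_line


# Illustrative portfolio: two risks close to the scenario center, one far away.
portfolio = [
    Location("warehouse A", 10.00, 20.00, 100.0, "cargo"),
    Location("terminal B", 10.01, 20.01, 50.0, "property"),
    Location("factory C", 12.00, 20.00, 200.0, "property"),
]

exposed, loss, by_line = scenario_accumulation(portfolio, 10.0, 20.0, 5.0, 0.4)

if __name__ == "__main__":
    print(f"exposed: {exposed}, scenario loss: {loss:.1f}, by line: {by_line}")
```

Breaking the accumulation out by line of business is what surfaces the “pinch points” both within and across classes; rerunning the function with different centers, radii or damage ratios is one simple way to run the “what if” tests the article describes.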