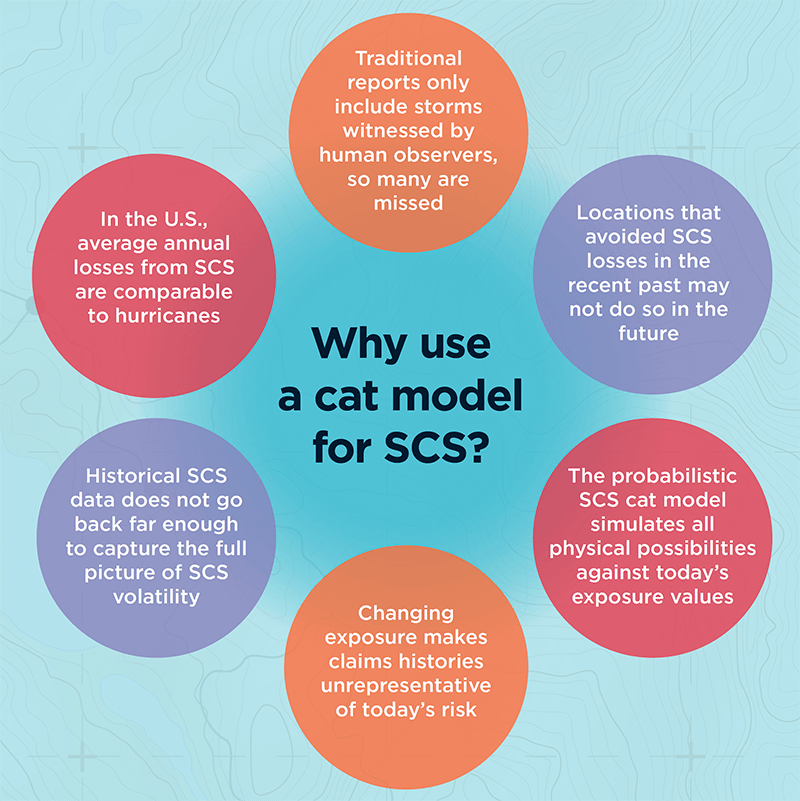

Severe convective storms can strike with little warning across vast areas of the planet, yet some insurers still rely solely on historical records that do not capture the full spectrum of risk at given locations. EXPOSURE explores the limitations of this approach and how they can be overcome with cat modeling.

Attritional and high-severity claims from severe convective storms (SCS) — tornadoes, hail, straight-line winds and lightning — are on the rise. In fact, in the U.S., average annual insured losses (AAL) from SCS now rival even those from hurricanes, at around US$17 billion, according to the latest RMS U.S. SCS Industry Loss Curve from 2018. In Canada, SCS cost insurers more than any other natural peril on average each year.

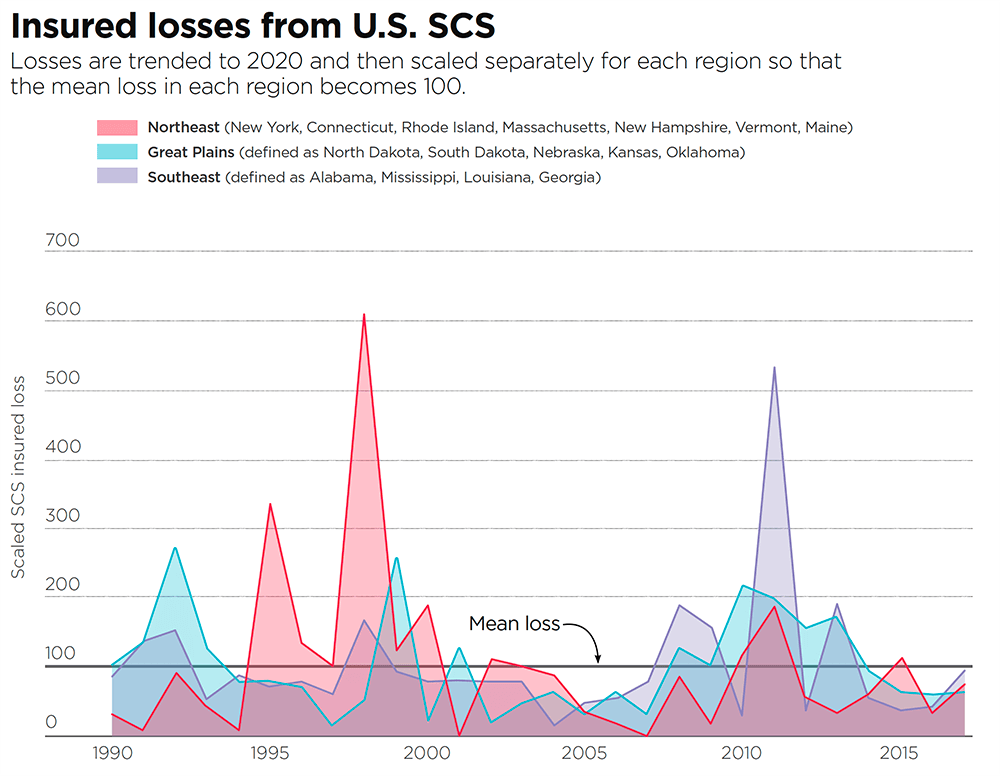

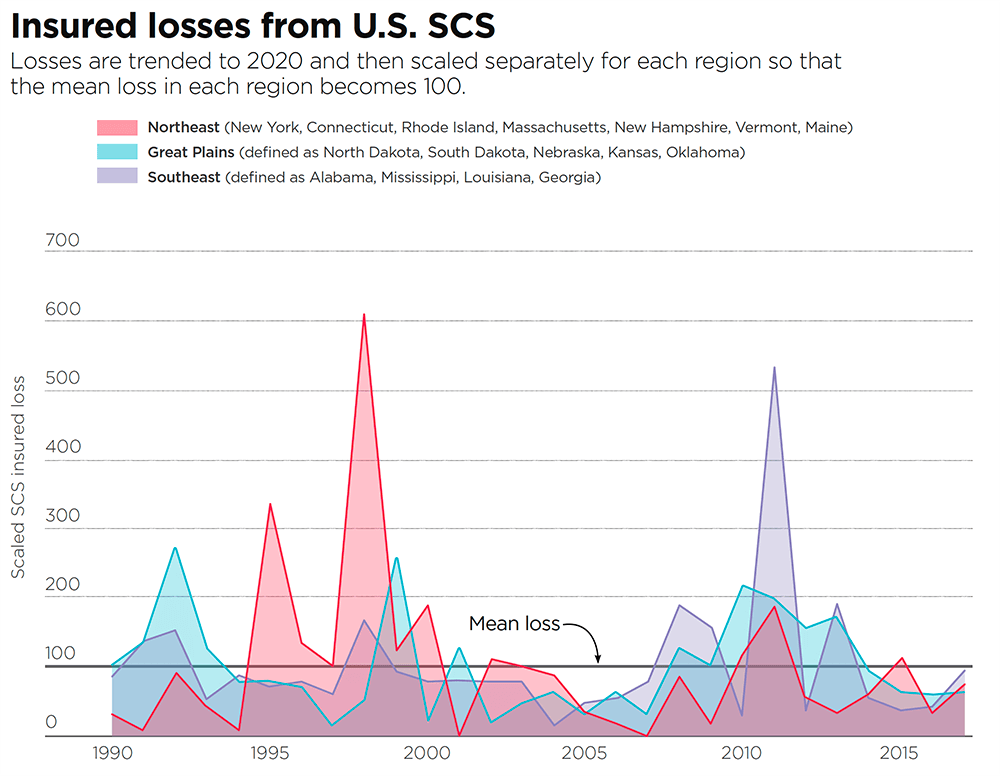

“Despite the scale of the threat, it is often overlooked as a low volatility, attritional peril,” says Christopher Allen, product manager for the North American SCS and winterstorm models at RMS. But losses can be very volatile, particularly when considering individual geographic regions or portfolios (see Figure 1). Moreover, they can be very high. “The U.S. experiences higher insured losses from SCS than any other country. According to the National Weather Service Storm Prediction Center, there are over 1,000 tornadoes every year on average. But while a powerful tornado does not cause the same total damage as a major earthquake or hurricane, these events are still capable of causing catastrophic losses that run into the billions.”

Figure 1: Insured losses from U.S. SCS in the Northeast (New York, Connecticut, Rhode Island, Massachusetts, New Hampshire, Vermont, Maine), Great Plains (North Dakota, South Dakota, Nebraska, Kansas, Oklahoma) and Southeast (Alabama, Mississippi, Louisiana, Georgia). Losses are trended to 2020 and then scaled separately for each region so the mean loss in each region becomes 100. Source: Industry Loss Data
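The scaling described in the Figure 1 caption can be illustrated with a minimal Python sketch. It assumes each region's losses have already been trended to a common year; the region names and loss values below are hypothetical placeholders, not the industry loss data behind the figure.

```python
from statistics import mean


def scale_to_mean_100(trended_losses):
    """Rescale one region's trended annual losses so their mean equals 100."""
    region_mean = mean(trended_losses)
    if region_mean == 0:
        raise ValueError("cannot scale a series whose mean loss is zero")
    return [100.0 * loss / region_mean for loss in trended_losses]


# Hypothetical trended annual losses in arbitrary units (illustration only).
hypothetical_losses = {
    "Region A": [50.0, 100.0, 150.0],
    "Region B": [10.0, 10.0, 40.0],
}

# Each region is scaled separately, so regions of very different size
# can be compared on the same axis.
scaled = {
    region: scale_to_mean_100(losses)
    for region, losses in hypothetical_losses.items()
}
```

Because every region ends up with a mean of 100, the spread of the scaled values around 100 shows relative volatility rather than absolute loss size.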

Two of the costliest SCS outbreaks to date hit the U.S. in spring 2011. In late April, large hail, straight-line winds and over 350 tornadoes spawned across wide areas of the South and Midwest, including over the cities of Tuscaloosa and Birmingham, Alabama, which were hit by a tornado rated EF-4 on the Enhanced Fujita (EF) scale. In late May, an outbreak of several hundred more tornadoes occurred over a similarly wide area, including an EF-5 tornado in Joplin, Missouri, that killed over 150 people. If the two outbreaks occurred again today, according to an RMS estimate based on trending industry loss data, each would easily cause over US$10 billion of insured loss.

However, extreme losses from SCS do not just occur in the U.S. In April 1999, a hailstorm in Sydney dropped hailstones of up to 3.5 inches (9 centimeters) in diameter over the city, causing insured losses of AU$5.6 billion according to the Insurance Council of Australia (ICA), currently the most costly insurance event in Australia’s history [1].

“It is entirely possible we will soon see claims in excess of US$10 billion from a single SCS event,” Allen says, warning that relying on historical data alone to quantify SCS (re)insurance risk leaves carriers underprepared and overexposed.

Historical Records are Short and Biased

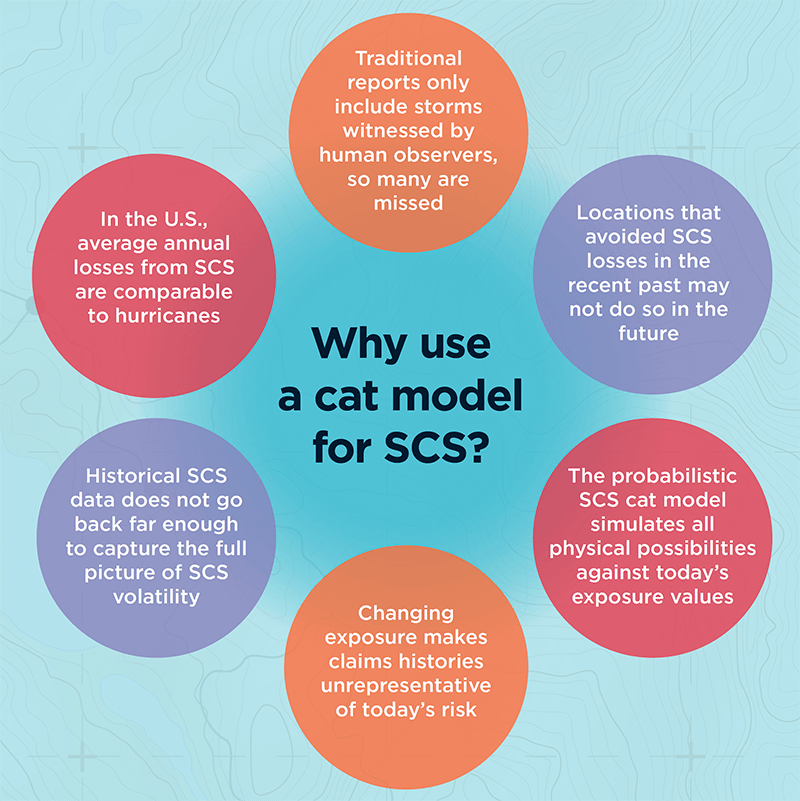

According to Allen, the rarity of SCS at a local level means historical weather and loss data fall short of fully characterizing SCS hazard. In the U.S., the Storm Prediction Center’s national record of hail and straight-line wind reports goes back to 1955, and tornado reports date back to 1950. In Canada, routine tornado reports go back to 1980. “These may seem like adequate records, but they only scratch the surface of the many SCS scenarios nature can throw at us,” Allen says.

“To capture full SCS variability at a given location, records should be simulated over thousands, not tens, of years,” he explains. “This is only possible using a cat model that simulates a very wide range of possible storms to give a fuller representation of the risk at that location. Observed over tens of thousands of years, most locations would have been hit by SCS just as frequently as their neighbors, but this will never be reflected in the historical records. Just because a town or city has not been hit by a tornado in recent years doesn’t mean it can’t be.”
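Allen's point about record length can be made concrete with a toy calculation. The Python sketch below is not a cat model: it assumes a single location with a fixed, hypothetical annual hit probability and treats years as independent. It compares what a record of about 70 years can show with what a much longer simulated catalog reveals.

```python
import random


def simulate_hit_years(annual_hit_probability, n_years, seed=0):
    """Return one boolean per simulated year: True if the location is hit."""
    rng = random.Random(seed)
    return [rng.random() < annual_hit_probability for _ in range(n_years)]


def probability_of_clean_record(annual_hit_probability, record_years):
    """Chance that a record of the given length contains no hit at all,
    assuming independent years (a simplifying assumption)."""
    return (1.0 - annual_hit_probability) ** record_years


# Hypothetical inputs, chosen only for illustration.
ANNUAL_HIT_PROBABILITY = 0.01   # a 1-in-100 annual chance of a damaging hit
RECORD_YEARS = 70               # roughly a record starting in 1950
SIMULATED_YEARS = 50_000        # length of the synthetic catalog

hits = simulate_hit_years(ANNUAL_HIT_PROBABILITY, SIMULATED_YEARS, seed=42)
simulated_rate = sum(hits) / SIMULATED_YEARS
clean_record_chance = probability_of_clean_record(
    ANNUAL_HIT_PROBABILITY, RECORD_YEARS
)

print(f"Hit rate recovered from the long simulation: {simulated_rate:.4f}")
print(f"Chance a {RECORD_YEARS}-year record shows no hit: {clean_record_chance:.2f}")
```

Under these assumptions, roughly half of all locations with a true 1-in-100 annual chance would show no hit at all in a 70-year record, while the long simulation recovers a rate close to the assumed one.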

Shorter historical records could also misrepresent the severity of SCS possible at a given location. Total insured catastrophe losses in Phoenix, Arizona, for example, were typically negligible between 1990 and 2009, but on October 5, 2010, Phoenix was hit by its largest-ever tornado and hail outbreak, causing economic losses of US$4.5 billion. (Source: NOAA National Centers for Environmental Information)

Just like the national observations, insurers’ own claims histories, or industry data such as presented in Figure 1, are also too short to capture the full extent of SCS volatility, Allen warns. “Some primary insurers write very large volumes of natural catastrophe business and have comprehensive claims records dating back 20 or so years, which are sometimes seen as good enough datasets on which to evaluate the risk at their insured locations. However, underwriting based solely on this length of experience could lead to more surprises and greater earnings instability.”

If a Tree Falls and No One Hears…

Historical SCS records in most countries rely primarily on human observation reports. If a tornado is not seen, it is not reported, which means that, unlike a hurricane or large earthquake, an SCS event can be missed in the recent historical record. “While this happens less often in Europe, which has a high population density, missed sightings can distort historical data in Canada, Australia and remote parts of the U.S.,” Allen explains.

Another key issue is that the EF scale rates tornado strength based on how much damage is caused, but this does not always reflect the power of the storm. If a strong tornado occurs in a rural area with few buildings, for example, it won’t register high on the EF scale, even though it could have caused major damage to an urban area.

“This again makes the historical record very challenging to interpret,” he says. “Catastrophe modelers invest a great deal of time and effort in understanding the strengths and weaknesses of historical data. By using robust aspects of observations in conjunction with other methods, for example numerical weather simulations, they are able to build upon and advance beyond what experience tells us, allowing for more credible evaluation of SCS risk than using experience alone.”

Then there is the issue of rising exposures. Urban expansion and rising property prices, in combination with factors such as rising labor costs and aging roofs that are increasingly susceptible to damage, are pushing exposure values upward.

“This means that an identical SCS in the same location would most likely result in a higher loss today than 20 years ago, or in some cases may result in an insured loss where previously there would have been none,” Allen explains.

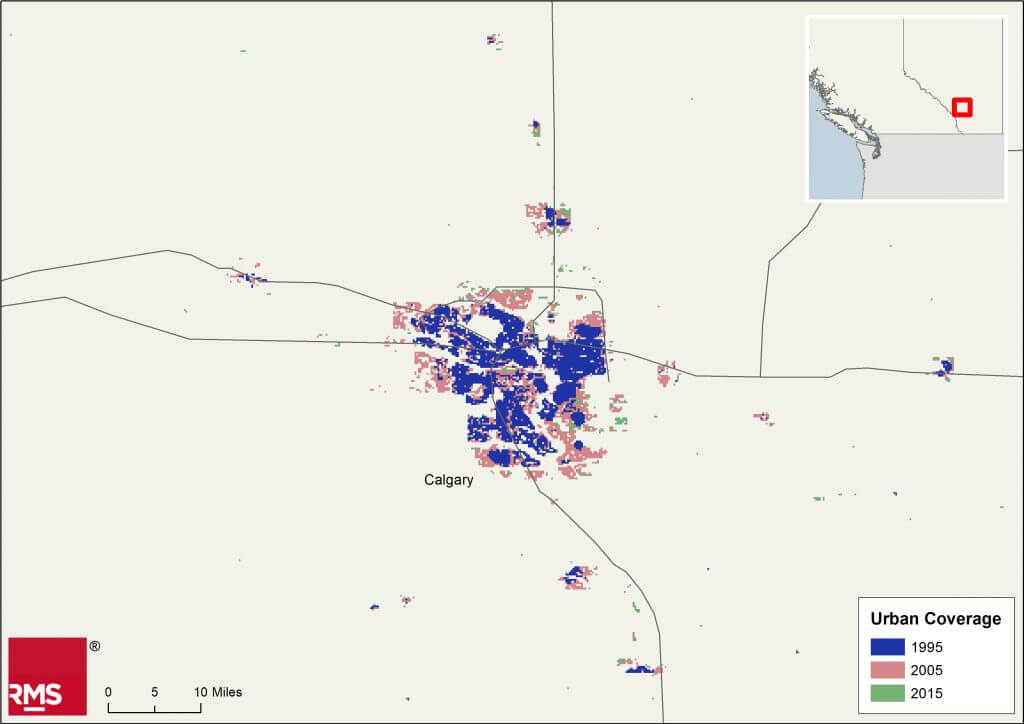

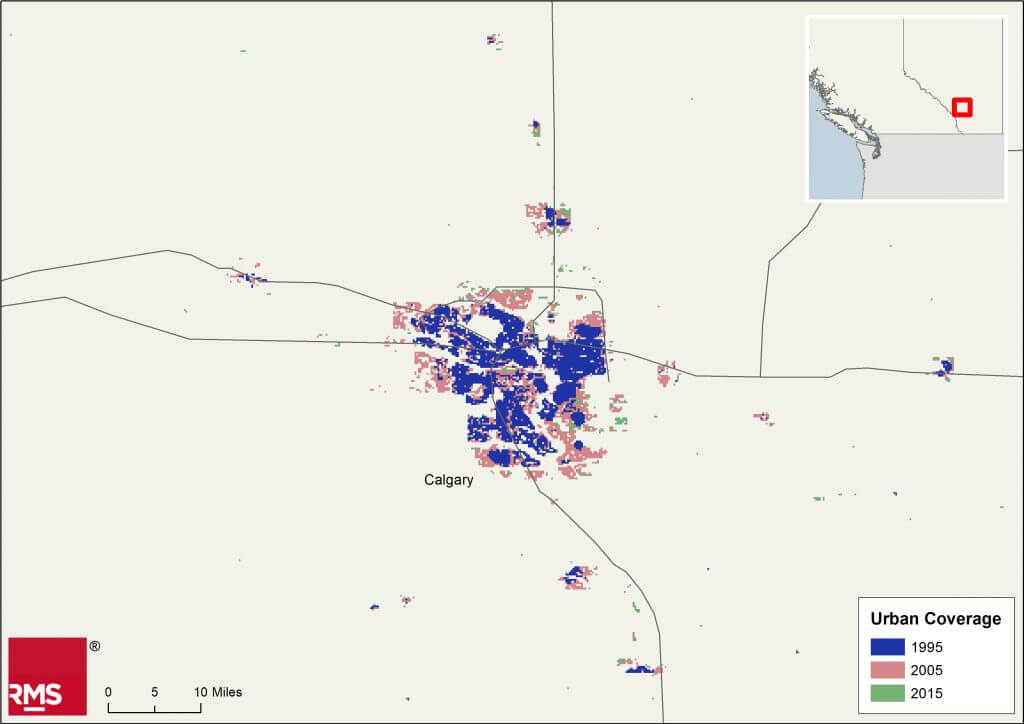

Calgary, Alberta, for example, is the hailstorm capital of Canada. On September 7, 1991, a major hailstorm over the city resulted in the country’s largest insured loss to date from a single storm: CA$343 million was paid out at the time. The city has of course expanded significantly since then (see Figure 2), and the value of the exposure in preexisting urban areas has also increased. An identical hailstorm occurring over the city today would therefore cause far larger insured losses, even without considering inflation.

Figure 2: Urban expansion in Calgary, Alberta, Canada. European Space Agency. Land Cover CCI Product User Guide Version 2. Tech. Rep. (2017). Available at: maps.elie.ucl.ac.be/CCI/viewer/download/ESACCI-LC-Ph2-PUGv2_2.0.pdf

“Probabilistic SCS cat modeling addresses these issues,” Allen says. “Rather than being constrained by historical data, the framework builds upon and beyond it using meteorological, engineering and insurance knowledge to evaluate what is physically possible today. This means claims do not have to be ‘on-leveled’ to account for changing exposures, which may require the user to make some possibly tenuous adjustments and extrapolations; users simply input the exposures they have today and the model outputs today’s risk.”

The Catastrophe Modeling Approach

In addition to their ability to simulate “synthetic” loss events over thousands of years, Allen argues, cat models make it easier to conduct sensitivity testing by location, varying policy terms or construction classes; to drill into loss-driving properties within portfolios; and to optimize attachment points for reinsurance programs. SCS cat models are commonly used in the reinsurance market, partly because they make it easy to assess tail risk (again, difficult to do using a short historical record alone), but they are currently used less frequently for underwriting primary risks. There are instances of carriers that use catastrophe models for reinsurance business but still rely on historical claims data for direct insurance business.
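To show the kind of metrics a simulated loss catalog makes available, the following Python sketch computes an average annual loss, an exceedance probability and the expected loss to a reinsurance layer from a tiny table of simulated annual losses. The table, the threshold and the layer terms are all hypothetical, and the functions are a simplified illustration rather than the RMS methodology.

```python
def average_annual_loss(annual_losses):
    """Mean loss per simulated year (AAL)."""
    return sum(annual_losses) / len(annual_losses)


def exceedance_probability(annual_losses, threshold):
    """Fraction of simulated years whose loss exceeds the threshold."""
    exceeding = sum(1 for loss in annual_losses if loss > threshold)
    return exceeding / len(annual_losses)


def layer_loss(loss, attachment, limit):
    """Loss ceded to a layer that pays up to `limit` above `attachment`."""
    return min(max(loss - attachment, 0.0), limit)


# Hypothetical simulated annual losses in US$ millions (illustration only).
# A real catalog would span thousands of simulated years.
simulated_annual_losses = [0.0, 0.0, 5.0, 10.0, 0.0, 20.0, 0.0, 0.0, 65.0, 0.0]

aal = average_annual_loss(simulated_annual_losses)
prob_over_15 = exceedance_probability(simulated_annual_losses, threshold=15.0)

# Expected annual loss to a hypothetical layer of 30 in excess of 20.
expected_layer_loss = average_annual_loss(
    [layer_loss(loss, attachment=20.0, limit=30.0)
     for loss in simulated_annual_losses]
)
```

Re-running the last step with different attachment and limit values is one simple way to compare candidate layer structures, and the same table can be filtered by location or policy terms for sensitivity testing.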

So why do some primary insurers not take advantage of the cat modeling approach? “Though not marketwide, there can be a perception that experience alone represents the full spectrum of SCS risk — and this overlooks the historical record’s limitations, potentially adding unaccounted-for risk to their portfolios,” Allen says. What is more, detailed studies of historical records and claims “on-leveling” to account for changes over time are challenging and very time-consuming. By contrast, insurers who are already familiar with the cat modeling framework (for example, for hurricane) should find that switching to a probabilistic SCS model is relatively simple and requires little additional learning from the user, as the model employs the same framework as for other peril models, he explains.

Furthermore, catastrophe model data formats, such as the RMS Exposure and Results Data Modules (EDM and RDM), are already widely exchanged, and now the Risk Data Open Standard™ (RDOS) will have increasing value within the (re)insurance industry. Reinsurance brokers make heavy use of cat modeling submissions when placing reinsurance, for example, while rating agencies increasingly request catastrophe modeling results when determining company credit ratings.

Allen argues that with property cat portfolios under pressure and the insurance market now hardening, it is all the more important that insurers select and price risks as accurately as possible to ensure they increase profits and reduce their combined ratios.

“A US$10 billion SCS loss is around the corner, and carriers need to be prepared and have at their disposal the ability to calculate the probability of that occurring for any given location,” he says. “To truly understand their exposure, risk must be determined based on all possible tomorrows, in addition to what has happened in the past.”

[1] Losses normalized to 2017 Australian dollars and exposure by the ICA. Source: https://www.icadataglobe.com/access-catastrophe-data.
