The extreme conditions of 2017 demonstrated the need for much greater data resolution on wildfire in North America

The 2017 California wildfire season was record-breaking on virtually every front. Some 1.25 million acres were torched by over 9,000 wildfire events during the period, with October to December seeing some of the most devastating fires ever recorded in the region*.

From an insurance perspective, according to the California Department of Insurance, as of January 31, 2018, insurers had received almost 45,000 claims relating to losses in the region of US$11.8 billion. These losses included damage or total loss to over 30,000 homes and 4,300 businesses.

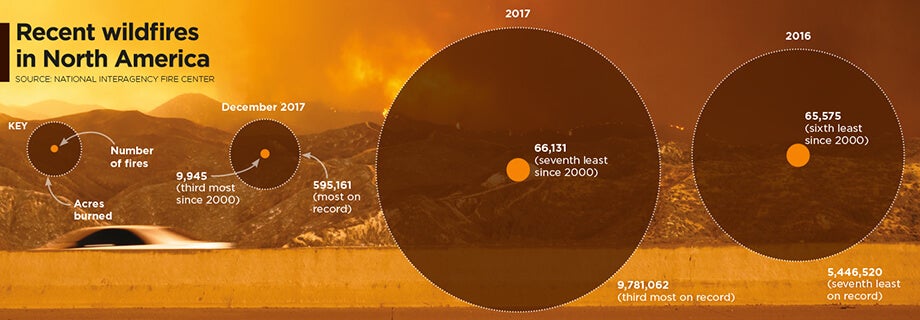

On a countrywide level, more than 66,000 wildfires burned some 9.8 million acres in 2017, according to the National Interagency Fire Center. That compares with 2016, when 65,575 wildfires burned 5.4 million acres.

Caught Off Guard

“2017 took us by surprise,” says Tania Schoennagel, research scientist at the University of Colorado, Boulder. “Unlike conditions now [March 2018], 2017 winter and early spring were moist with decent snowpack and no significant drought recorded.”

Yet despite seemingly benign conditions, it rapidly became the third-largest wildfire year since 1960, she explains. “This was primarily due to rapid warming and drying in the late spring and summer of 2017, with parts of the West witnessing some of the driest and warmest periods on record during the summer and remarkably into the late fall.

“Additionally, moist conditions in early spring promoted build-up of fine fuels which burn more easily when hot and dry,” continues Schoennagel. “This combination rapidly set up conditions conducive to burning that continued longer than usual, making for a big fire year.”

While Southern California has experienced major wildfire activity in recent years, until 2017 Northern California had only experienced “minor-to-moderate” events, according to Mark Bove, research meteorologist, risk accumulation, Munich Reinsurance America, Inc.

“In fact, the region had not seen a major, damaging fire outbreak since the Oakland Hills firestorm in 1991, a US$1.7 billion loss at the time,” he explains. “Since then, large damaging fires have repeatedly scorched parts of Southern California, and as a result much of the industry has focused on wildfire risk in that region due to the higher frequency and due to the severity of recent events.

“Although the frequency of large, damaging fires may be lower in Northern California than in the southern half of the state,” he adds, “the Wine Country fires vividly illustrated not only that extreme loss events are possible in both locales, but that loss magnitudes can be larger in Northern California. A US$11 billion wildfire loss in Napa and Sonoma counties may not have been on the radar screen for the insurance industry prior to 2017, but such losses are now.”

Smoke on the Horizon

Looking ahead, it seems increasingly likely that such events will grow in severity and frequency as climate-related conditions create drier, more fire-conducive environments in North America.

“Since 1985, more than 50 percent of the increase in the area burned by wildfire in the forests of the Western U.S. has been attributed to anthropogenic climate change,” states Schoennagel. “Further warming is expected, in the range of 2 to 4 degrees Fahrenheit in the next few decades, which will spark ever more wildfires, perhaps beyond the ability of many Western communities to cope.”

“Climate change is causing California and the American Southwest to be warmer and drier, leading to an expansion of the fire season in the region,” says Bove. “In addition, warmer temperatures increase the rate of evapotranspiration in plants and evaporation of soil moisture. This means that drought conditions return to California faster today than in the past, increasing the fire risk.”

While Bove believes there is still a degree of uncertainty as to whether the frequency and severity of wildfires in North America have actually changed over the past few decades, there is no doubt that exposure levels are increasing and will continue to do so.

“The risk of a wildfire impacting a densely populated area has increased dramatically,” states Bove. “Most of the increase in wildfire risk comes from socioeconomic factors, like the continued development of residential communities along the wildland-urban interface and the increasing value and quantity of both real estate and personal property.”

Breaches in the Data

Yet while the threat of wildfire is increasing, the ability to quantify that increased exposure, and to determine the probability of an event occurring, is limited by a lack of granular historical data, both on a countrywide basis and even in highly exposed fire regions such as California.

“Even though there is data on thousands of historical fires over the past half-century,” says Bove, “it is of insufficient quantity and resolution to reliably determine the frequency of fires at all locations across the U.S.

“This is particularly true in states and regions where wildfires are less common, but still holds true in high-risk states like California,” he continues. “This lack of data, as well as the fact that the wildfire risk can be dramatically different on the opposite ends of a city, postcode or even a single street, makes it difficult to determine risk-adequate rates.”

According to Max Moritz, Cooperative Extension specialist in fire at the University of California, current approaches to fire mapping and modeling are also based too much on fire-specific data.

“A lot of the risk data we have comes from a bottom-up view of the fire risk itself. Methodologies are usually based on the Rothermel Fire Spread equation, which looks at spread rates, flame length, heat release, et cetera. But often we’re ignoring critical data such as wind patterns, ignition loads, vulnerability characteristics, spatial relationships, as well as longer-term climate patterns, the length of the fire season and the emergence of fire-weather corridors.”
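For reference, the Rothermel surface fire spread model that Moritz mentions is usually written as a ratio of heat source to heat sink: R = I_R ξ (1 + φ_w + φ_s) / (ρ_b ε Q_ig). The sketch below shows only that final step. The function name and the example values are illustrative assumptions, not figures from any particular model; the full method derives each input from fuel, moisture, wind and slope data.

```python
def rothermel_rate_of_spread(
    reaction_intensity,      # I_R: rate of heat release per unit area of fire front
    propagating_flux_ratio,  # xi: share of that heat that reaches unburned fuel
    wind_factor,             # phi_w: dimensionless increase due to wind
    slope_factor,            # phi_s: dimensionless increase due to slope
    bulk_density,            # rho_b: oven-dry fuel mass per unit volume of fuel bed
    effective_heating,       # epsilon: share of the fuel heated before ignition
    heat_of_preignition,     # Q_ig: heat needed to ignite a unit mass of fuel
):
    """Rate of spread R = heat source / heat sink (Rothermel's final equation).

    Units must be consistent. In Rothermel's original imperial units
    (Btu, ft, lb, min) the result is in feet per minute.
    """
    heat_source = reaction_intensity * propagating_flux_ratio * (
        1.0 + wind_factor + slope_factor
    )
    heat_sink = bulk_density * effective_heating * heat_of_preignition
    return heat_source / heat_sink


# Illustrative inputs only: the same fuel bed with and without wind.
calm = rothermel_rate_of_spread(1000.0, 0.05, 0.0, 0.0, 0.5, 0.4, 250.0)
windy = rothermel_rate_of_spread(1000.0, 0.05, 3.0, 0.0, 0.5, 0.4, 250.0)
print(f"calm: {calm:.2f}, windy: {windy:.2f}")
```

Everything in the equation describes the fuel bed and the fire itself, which is the "bottom-up" view Moritz refers to. Ignition loads, building vulnerability and longer-term climate patterns have no term of their own.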

Ground-level data is also lacking, he believes. “Without very localized data you’re not factoring in things like the unique landscape characteristics of particular areas that can make them less prone to fire risk even in high-risk areas.”

Further, data on mitigation measures at the individual community and property level is in short supply. “Currently, (re)insurers commonly receive data around the construction, occupancy and age of a given risk,” explains Bove, “information that is critical for the assessment of a wind or earthquake risk.”

However, the information needed to properly assess wildfire risk is typically not captured: for example, whether the roof covering or siding is combustible. Bove says it is also important to know whether soffits and vents are open-air or protected by a metal covering. “Information about a home’s upkeep and surrounding environment is critical as well,” he adds.

At Ground Level

While wildfire may not be as data intensive as a peril such as flood, it is almost as demanding, especially on computational capacity. It requires simulating stochastic or scenario events all the way from ignition through to spread, creating realistic footprints that capture both the risk itself and the physical mechanisms that carry fire into populated environments.
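To make the idea of simulating events from ignition through to spread more concrete, here is a deliberately simplified sketch. It is not how any commercial model works. It assumes a small grid of burnable and non-burnable cells and a single hypothetical `spread_prob` that stands in for fuel, weather and terrain effects. Repeating the simulation many times gives a burn probability for each cell.

```python
import random


def simulate_footprint(fuel, ignition, spread_prob, rng):
    """Return the set of (row, col) cells burned by one simulated fire.

    fuel is a 2D list of booleans (True = burnable). Fire spreads from
    each newly burning cell to its four neighbours with probability
    spread_prob, a stand-in for fuel, weather and terrain effects.
    """
    rows, cols = len(fuel), len(fuel[0])
    burned = {ignition}
    frontier = [ignition]
    while frontier:
        next_frontier = []
        for r, c in frontier:
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if (
                    0 <= nr < rows
                    and 0 <= nc < cols
                    and fuel[nr][nc]
                    and (nr, nc) not in burned
                    and rng.random() < spread_prob
                ):
                    burned.add((nr, nc))
                    next_frontier.append((nr, nc))
        frontier = next_frontier
    return burned


def burn_probability(fuel, spread_prob, n_events, seed=0):
    """Estimate per-cell burn probability from n_events random ignitions."""
    rng = random.Random(seed)
    rows, cols = len(fuel), len(fuel[0])
    burnable = [(r, c) for r in range(rows) for c in range(cols) if fuel[r][c]]
    counts = [[0] * cols for _ in range(rows)]
    for _ in range(n_events):
        ignition = rng.choice(burnable)
        for r, c in simulate_footprint(fuel, ignition, spread_prob, rng):
            counts[r][c] += 1
    return [[n / n_events for n in row] for row in counts]


# A strip of non-burnable cells (False) acts as a fire break in this toy grid.
grid = [[True, True, False, True] for _ in range(4)]
for row in burn_probability(grid, spread_prob=0.6, n_events=2000):
    print([round(p, 2) for p in row])
```

Even in this toy version the cost grows with the number of events multiplied by the size of each footprint, which hints at why computational capacity matters at continental scale and high resolution.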

The RMS® North America Wildfire HD Model capitalizes on this expanded computational capacity and improved data sets to bring probabilistic capabilities to bear on the peril for the first time across the entirety of the contiguous U.S. and Canada.

Using a high-resolution simulation grid, the model provides a clear understanding of factors such as the vegetation levels, the density of buildings, the vulnerability of individual structures and the extent of defensible space. The model also utilizes weather data based on re-analysis of historical weather observations to create a distribution of conditions from which to simulate stochastic years. That means that for a given location, the model can generate a weather time series that includes wind speed and direction, temperature, moisture levels, et cetera.
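One common way to turn a historical record into many plausible simulated years is to resample it in blocks. The sketch below assumes that approach; the article does not say how the model builds its weather distribution, so the `WeatherDay` fields, the block length and the function name are all hypothetical. Copying whole blocks of consecutive days from a single historical year keeps wind, temperature and humidity physically consistent with one another within each block.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class WeatherDay:
    wind_speed_kmh: float
    wind_dir_deg: float
    temperature_c: float
    relative_humidity_pct: float


def simulate_weather_year(history, block_len, rng):
    """Build one synthetic year by block-resampling historical years.

    history is a list of years, each a list of WeatherDay in calendar
    order. Each block of block_len consecutive days is copied from one
    randomly chosen historical year at the same calendar position, so
    seasonality is preserved.
    """
    n_days = min(len(year) for year in history)
    series = []
    for start in range(0, n_days, block_len):
        source_year = rng.choice(history)
        series.extend(source_year[start:min(start + block_len, n_days)])
    return series


# Two made-up ten-day "years" to show the mechanics.
cool_year = [WeatherDay(10.0, 270.0, 15.0 + d, 60.0) for d in range(10)]
hot_year = [WeatherDay(40.0, 45.0, 30.0 + d, 15.0) for d in range(10)]
synthetic = simulate_weather_year([cool_year, hot_year], 3, random.Random(42))
for day in synthetic:
    print(day)
```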

As wildfire risk is set to increase in frequency and severity due to a number of factors, ranging from climate change to the expansion of the wildland-urban interface caused by development in fire-prone areas, the industry must understand how those factors alter the risk landscape.

On the Wind

Embers have long been recognized as a key factor in fire spread, either advancing the main burn or igniting spot fires some distance from the originating source. Yet despite this, current wildfire models do not effectively factor in ember travel, according to Max Moritz, from the University of California.

“Post-fire studies show that the vast majority of buildings in the U.S. burn from the inside out due to embers entering the property through exposed vents and other entry points,” he says. “However, most of the fire spread models available today struggle to precisely recreate the fire parameters and are ineffective at modeling ember travel.”

During the Tubbs Fire, the most destructive wildfire event in California’s history, embers carried on extreme ‘Diablo’ winds sparked ignitions up to two kilometers from the flame front. The rapid transport of embers not only created a faster-moving fire, with Tubbs covering some 30 to 40 kilometers within hours of initial ignition, but also sparked devastating ignitions in areas believed to be at zero risk of fire, such as Coffey Park, Santa Rosa. This highly built-up area experienced an urban conflagration due to ember-fueled ignitions.

“Embers can fly long distances and ignite fires far away from their source,” explains Markus Steuer, consultant, corporate underwriting at Munich Re. “In the case of the Tubbs Fire they jumped over a freeway and ignited the fire in Coffey Park, where more than 1,000 homes were destroyed. This spot fire was not connected to the main fire. In risk models or hazard maps this has to be considered. Firebrands can fly over natural or man-made fire breaks and damage can occur at some distance away from the densely vegetated areas.”

For the first time, the RMS North America Wildfire HD Model enables the explicit simulation of ember transport and accumulation, allowing users to detail the impact of embers beyond the fire perimeters. The simulation capabilities extend beyond the traditional fuel-based fire simulations, and enable users to capture the extent to which large accumulations of firebrands and embers can be lofted beyond the perimeters of the fire itself and spark ignitions in dense residential and commercial areas.
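As a rough illustration of what simulating ember transport and accumulation can involve, the sketch below lofts embers from cells on the fire front and counts where they land. It rests on stated assumptions and is not the model's actual method: travel distance is drawn from an exponential distribution, direction scatters around the downwind heading, and every parameter name and value is hypothetical.

```python
import math
import random
from collections import Counter


def simulate_ember_accumulation(
    front_cells,          # (row, col) cells on the active fire front
    wind_to_deg,          # direction the wind blows toward, clockwise from north
    mean_distance_cells,  # assumed mean ember travel distance, in grid cells
    embers_per_cell,      # embers released from each front cell
    grid_shape,           # (rows, cols) of the simulation grid
    rng,
    spread_deg=15.0,      # assumed angular scatter around the wind direction
):
    """Count embers landing in each grid cell (row 0 is the northern edge)."""
    rows, cols = grid_shape
    landed = Counter()
    for r, c in front_cells:
        for _ in range(embers_per_cell):
            distance = rng.expovariate(1.0 / mean_distance_cells)
            heading = math.radians(rng.gauss(wind_to_deg, spread_deg))
            # Row indices grow southward, so a northward component reduces the row.
            nr = r - round(distance * math.cos(heading))
            nc = c + round(distance * math.sin(heading))
            if 0 <= nr < rows and 0 <= nc < cols:
                landed[(nr, nc)] += 1
    return landed


# Fire front along the western edge of the grid, wind blowing toward the east.
front = [(r, 0) for r in range(10)]
counts = simulate_ember_accumulation(front, 90.0, 6.0, 500, (10, 40), random.Random(7))
farthest = max(col for _, col in counts)
print(f"embers landed up to {farthest} cells downwind of the front")
```

Because landing cells are chosen without reference to the fuel in between, embers in this sketch can cross a fire break or a freeway, which is the behavior Steuer describes at Coffey Park.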

As was shown in the Tubbs Fire, areas not previously considered at threat of wildfire were exposed by the ember transport. The introduction of ember simulation capability allows the industry to quantify the complete wildfire risk appropriately across North America wildfire portfolios.