As RMS launches Version 17 of its North America Earthquake Models, EXPOSURE looks at the developments leading to the update and how distilling immense stores of high-resolution seismic data into the industry’s most comprehensive earthquake models will empower firms to make better business decisions.

The launch of RMS’ latest North America Earthquake Models marks a major step forward in the industry’s ability to accurately analyze and assess the impacts of these catastrophic events, enabling firms to write risk with greater confidence thanks to the rigorous science and engineering that underpin the models.

The value of the models to firms seeking new ways to differentiate and diversify their portfolios, as well as to price risk more accurately, comes from a host of data and scientific updates. These include the incorporation of seismic source data from the U.S. Geological Survey (USGS) 2014 National Seismic Hazard Mapping Project.

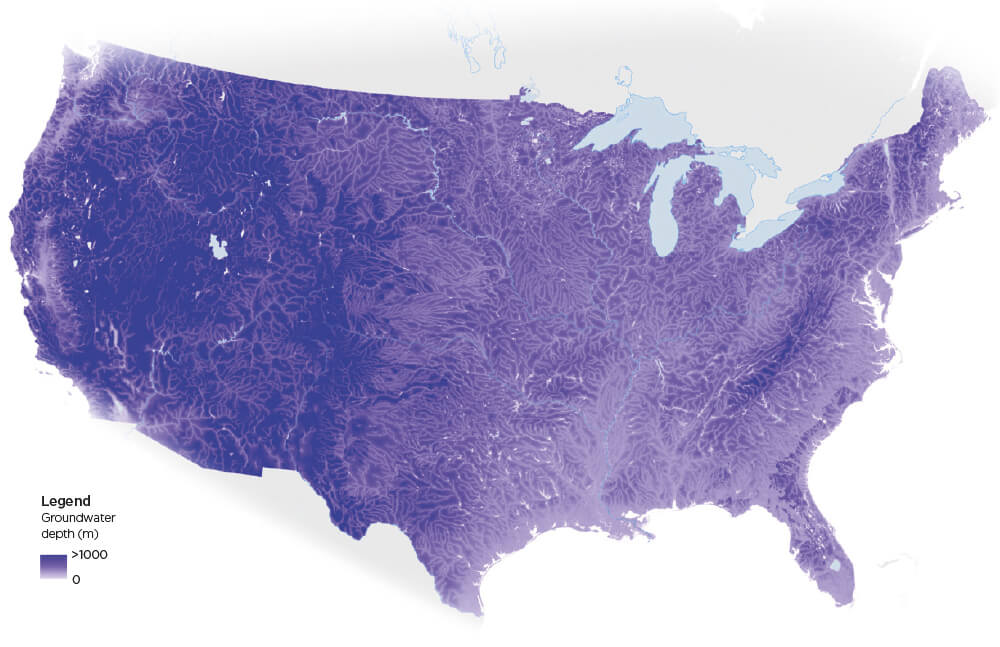

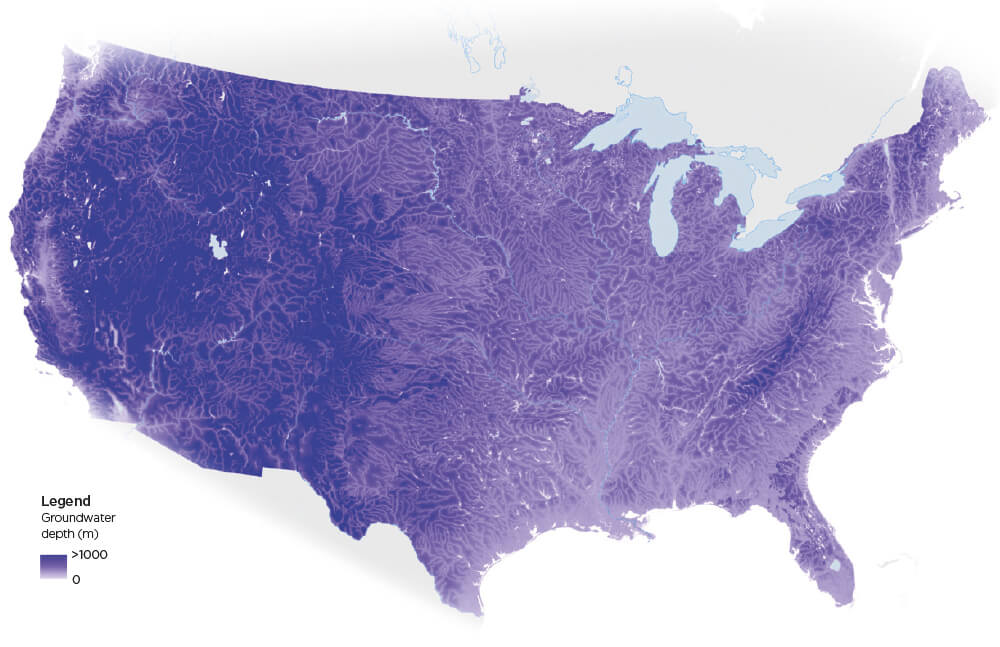

First groundwater map for liquefaction

“Our goal was to provide clients with a seamless view of seismic hazards across the U.S., Canada and Mexico that encapsulates the latest data and scientific thinking, and we’ve achieved that and more,” explains Renee Lee, head of earthquake model and data product management at RMS.

“There have been multiple developments – research and event-driven – which have significantly enhanced understanding of earthquake hazards. It was therefore critical to factor these into our models to give our clients better precision and improved confidence in their pricing and underwriting decisions, and to meet the regulatory requirements that models must reflect the latest scientific understanding of seismic hazard.”

Founded on Collaboration

Since the last RMS model update in 2009, the industry has witnessed the two largest seismic-related loss events in history – the New Zealand Canterbury Earthquake Sequence (2010-2011) and the Tohoku Earthquake (2011).

“We worked very closely with the local markets in each of these affected regions,” adds Lee, “collaborating with engineers and the scientific community, as well as sifting through billions of dollars of claims data, in an effort not only to understand the seismic behavior of these events, but also their direct impact on the industry itself.”

A key learning from this work was the impact of catastrophic liquefaction. “We analyzed billions of dollars of claims data and reports to understand this phenomenon both in terms of the extent and severity of liquefaction and the different modes of failure caused to buildings,” says Justin Moresco, senior model product manager at RMS. “That insight enabled us to develop a high-resolution approach to model liquefaction that we have been able to introduce into our new North America Earthquake Models.”

An important observation from the Canterbury Earthquake Sequence was that the severity of liquefaction varied over short distances. Two buildings, nearly side by side in some cases, experienced significantly different levels of hazard because of shifting geotechnical features. “Our more developed approach to modeling liquefaction captures this variation, but it’s just one of the areas where the new models can differentiate risk at a higher resolution,” said Moresco. “The updated models also do a better job of capturing where soft soils are located, which is essential for predicting the hot spots of amplified earthquake shaking.”

“There is no doubt that RMS embeds more scientific data into its models than any other commercial risk modeler,” Lee continues. “Throughout this development process, for example, we met regularly with USGS developers, having active discussions about the scientific decisions being made. In fact, our model development lead is on the agency’s National Seismic Hazard and Risk Assessment Steering Committee, while two members of our team are authors associated with the NGA-West 2 ground motion prediction equations.”

The North America Earthquake Models in Numbers

360,000

Number of fault sources included in UCERF3, the USGS California seismic source model

>3,800

Number of unique U.S. vulnerability functions in RMS’ 2017 North America Earthquake Models for building shake coverage, with the ability to further differentiate risk based on 21 secondary building characteristics

>30

Size of team at RMS that worked on updating the latest model

Distilling the Data

While data is the foundation of all models, the challenge is to distil it down to its most business-critical form to give it value to clients. “We are dealing with data sets spanning millions of events,” explains Lee. “For example, UCERF3, the USGS California seismic source model, alone incorporates more than 360,000 fault sources. So, you have to condense that immense amount of data in such a way that it remains robust but our clients can run it within ‘business hours’.”

Since the release of the USGS data in 2014, RMS has had more than 30 scientists and engineers working on how to take data generated by a supercomputer once every five to six years and turn it into a model that clients can use dynamically and systematically to support their risk assessment.

“You need to grasp the complexities within the USGS model and how the data has evolved,” says Mohsen Rahnama, chief risk modeling officer and general manager of the RMS models and data business. “In the previous California seismic source model, for example, the USGS used 480 logic tree branches, while this time they use 1,440 logic trees. You can’t simply implement the data – you have to understand it. How do these faults interact? How does it impact ground motion attenuation? How can I model the risk systematically?”

As part of this process, RMS maintained regular contact with the USGS, keeping the agency informed of how RMS was implementing the data and what distillation had taken place, to help validate the RMS approach.

Building Confidence

Demonstrating its commitment to transparency, RMS also provides clients with access to its scientists and engineers to help them drill down into the changes in the model. Further, it is publishing comprehensive documentation on the methodologies and validation processes that underpin the new version.

Expanding the Functionality

Upgraded soil amplification methodology that empowers (re)insurers to enter a new era of high-resolution geotechnical hazard modeling, including the development of a Vs30 (average shear wave velocity in the top 30 meters at site) data layer spanning North America

Advanced ground motion models leveraging thousands of historical earthquake recordings to accurately predict the attenuation of shaking from source to site

New functionality enabling high and low representations of vulnerability and ground motion

3,800+ unique U.S. vulnerability functions for building shake coverage. Ability to further differentiate risk based on 21 secondary building characteristics

Latest modeling for very tall buildings (>40 stories) enables more accurate underwriting of high-value assets

New probabilistic liquefaction model leveraging data from the 2010-2011 Canterbury Earthquake Sequence in New Zealand

Ability to evaluate secondary perils: tsunami, fire following earthquake and earthquake sprinkler leakage

New risk calculation functionality based on an event set that includes induced seismicity

Updated basin models for Seattle, the Mississippi Embayment, Mexico City and Los Angeles, plus a new basin model for Vancouver

Latest historical earthquake catalog from the Geological Survey of Canada integrated, plus latest research data on the Mexico Subduction Zone

Seismic source data from the U.S. Geological Survey (USGS) 2014 National Seismic Hazard Mapping Project incorporated, which includes the third Uniform California Earthquake Rupture Forecast (UCERF3)

Updated hazard models for Alaska and Hawaii, which were not updated by the USGS
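
For readers less familiar with the Vs30 measure mentioned in the list above, here is a minimal sketch of how it is conventionally computed from a layered soil profile: 30 meters divided by the shear-wave travel time through the top 30 meters. It is a generic illustration, not RMS’ implementation. The function name `vs30` and the example layer values are hypothetical.

```python
from typing import Sequence


def vs30(thicknesses_m: Sequence[float], velocities_mps: Sequence[float]) -> float:
    """Time-averaged shear-wave velocity (m/s) over the top 30 m of a site.

    Layers are listed from the surface downward. This is the conventional
    travel-time average, not any vendor-specific method (assumption).
    """
    if len(thicknesses_m) != len(velocities_mps):
        raise ValueError("thicknesses and velocities must have the same length")

    remaining_m = 30.0      # depth still to be covered
    travel_time_s = 0.0     # accumulated vertical travel time

    for thickness, velocity in zip(thicknesses_m, velocities_mps):
        if thickness <= 0 or velocity <= 0:
            raise ValueError("thicknesses and velocities must be positive")
        used = min(thickness, remaining_m)   # only count material above 30 m
        travel_time_s += used / velocity
        remaining_m -= used
        if remaining_m <= 0:
            break

    if remaining_m > 0:
        raise ValueError("profile is shallower than 30 m")

    return 30.0 / travel_time_s


# Hypothetical profile: 10 m of soft soil over 20 m of stiffer material.
soft_site = vs30([10.0, 20.0], [200.0, 400.0])
```

In this hypothetical profile the result is about 300 m/s, lower than the simple depth-weighted mean of the two layer velocities. Slow, soft layers dominate the travel time, which is why soft soils stand out as hot spots of amplified shaking.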