EXPOSURE delves into the algorithmic depths of machine learning to better understand the data potential that it offers the insurance industry.

“Machine learning is similar to how you teach a child to differentiate between similar animals,” explains Peter Hahn, head of predictive analytics at Zurich North America. “Instead of telling them the specific differences, we show them numerous different pictures of the animals, which are clearly tagged, again and again. Over time, they intuitively form a pattern recognition that allows them to tell a tiger from, say, a leopard. You can’t predefine a set of rules to categorize every animal, but through pattern recognition you learn what the differences are.”
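Hahn's analogy corresponds to what practitioners call supervised learning: the algorithm is given tagged examples and works out the distinguishing pattern for itself. A minimal sketch of that idea follows, using a nearest-centroid classifier chosen purely for illustration. The two features (a "stripe score" and a "spot score"), the sample values and the function names are all assumptions, not anything described by the interviewees.

```python
from collections import defaultdict
from math import dist


def fit_centroids(examples):
    """Average the feature vectors of each label to get one centroid per label."""
    grouped = defaultdict(list)
    for features, label in examples:
        grouped[label].append(features)
    return {
        label: tuple(sum(column) / len(rows) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Return the label whose centroid lies closest to the new example."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical tagged "pictures", each reduced to two made-up features:
# (stripe score, spot score), both between 0 and 1.
tagged_examples = [
    ((0.9, 0.1), "tiger"),
    ((0.8, 0.2), "tiger"),
    ((0.1, 0.9), "leopard"),
    ((0.2, 0.8), "leopard"),
]

centroids = fit_centroids(tagged_examples)
print(predict(centroids, (0.7, 0.3)))  # a mostly striped animal
```

No rule such as "stripes mean tiger" is written anywhere in the code; the distinction emerges from the tagged examples alone, which is the point of the analogy.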

In fact, pattern recognition is already part of how underwriters assess a risk, he continues. “Let’s say an underwriter is evaluating a company’s commercial auto exposures. Their decision-making process will obviously involve traditional, codified analytical processes, but it will also include sophisticated pattern recognition based on their experiences of similar companies operating in similar fields with similar constraints. They essentially know what this type of risk ‘looks like’ intuitively.”

Tapping the Stream

At its core, machine learning is a mechanism to help us make better sense of data, and to keep learning from that data on an ongoing basis. Given how data-intensive the insurance industry is, its potential to support the industry's work is considerable.

“If you look at models, data is the fuel that powers them all,” says Christos Mitas, vice president of model development at RMS. “We are now operating in a world where that data is expanding exponentially, and machine learning is one tool that will help us to harness that.”

One area in which Mitas and his team have been exploring machine learning is cyber risk modeling. “Where it can play an important role here is in helping us tackle the complexity of this risk. Being able to collect and digest more effectively the immense volumes of data which have been harvested from numerous online sources and datasets will yield a significant advantage.”

“MACHINE LEARNING CAN HELP US GREATLY EXPAND THE NUMBER OF EXPLANATORY VARIABLES WE MIGHT INCLUDE TO ADDRESS A PARTICULAR QUESTION”

CHRISTOS MITAS

RMS

He also sees it having a positive impact from an image processing perspective. “With developments in machine learning, for example, we might be able to introduce new data sources into our processing capabilities and make it a faster and more automated data management process to access images in the aftermath of a disaster. Further, we might be able to apply machine learning algorithms to analyze building damage post event to support speedier loss assessment processes.”

“Advances in natural language processing could also help tremendously in claims processing and exposure management,” he adds, “where you have to consume reams of reports, images and facts rather than structured data. That is where algorithms can really deliver a different scale of potential solutions.”

At the underwriting coalface, Hahn believes a clear area where machine learning can be leveraged is in the assessment and quantification of risks. “In this process, we are looking at thousands of data elements to see which of these will give us a read on the risk quality of the potential insured. Analyzing that data based on manual processes, given the breadth and volume, is extremely difficult.”
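To make the screening problem concrete, here is a small sketch of one simple way to rank data elements by how strongly each tracks an outcome, using absolute Pearson correlation. This is an illustrative stand-in, not the method Zurich uses; the column names, the values and the claims outcome are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def rank_elements(columns, outcome):
    """Order data elements from most to least correlated with the outcome."""
    scores = {name: abs(pearson(values, outcome)) for name, values in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical data for five insured fleets.
claims = [1, 2, 3, 4, 5]
columns = {
    "office_count": [2, 2, 2, 2, 2],       # constant, so uninformative
    "fleet_age": [3, 1, 4, 1, 5],          # weakly related
    "annual_miles": [10, 20, 30, 40, 50],  # moves in step with claims
}

print(rank_elements(columns, claims))
```

With thousands of columns instead of three, the same loop runs unchanged, which is where automation starts to outpace manual review. Correlation only captures linear relationships one element at a time, so in practice it would be a first filter rather than a full assessment.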

Looking Behind the Numbers

Mitas is, however, highly conscious of the need to establish how machine learning fits into the existing insurance ecosystem before trying to move too far ahead. “The technology is part of our evolution and offers us a new tool to support our endeavors. However, where our process as risk modelers starts is with a fundamental understanding of the scientific principles which underpin what we do.”

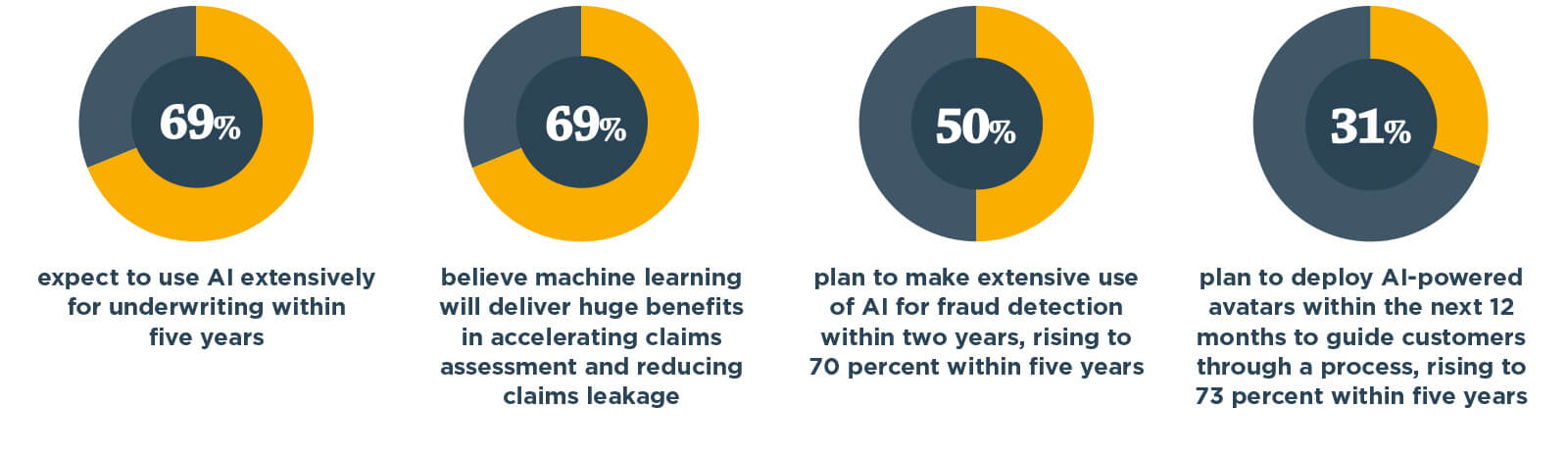

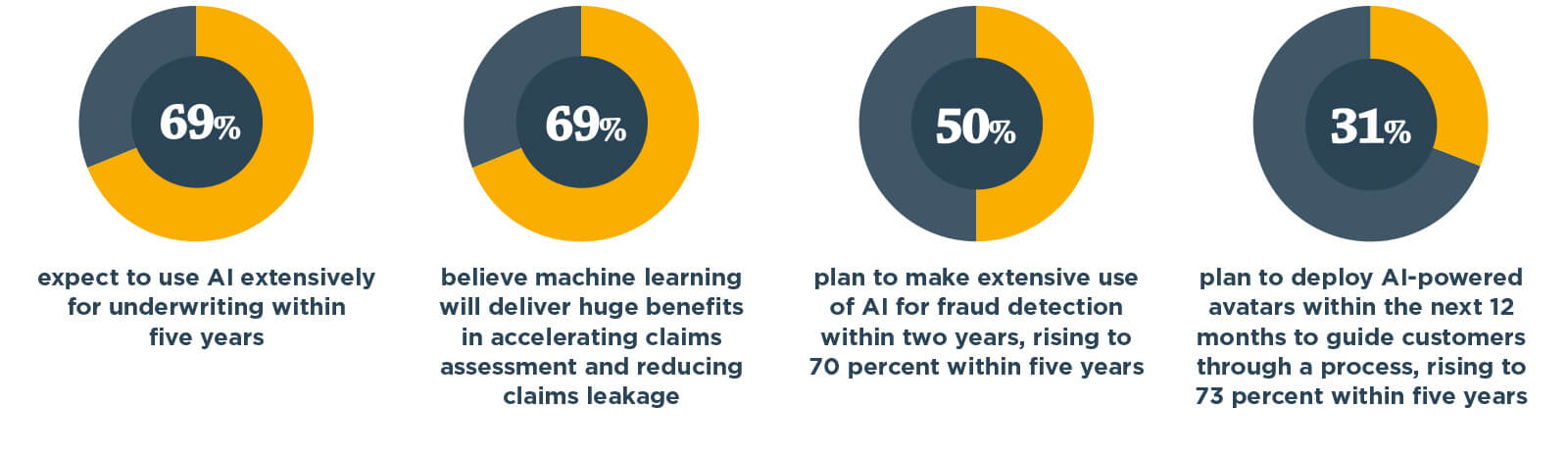

Making the Investment. Source: The Future of General Insurance Report, based on research conducted by Marketforce Business Media and the UK’s Chartered Insurance Institute in August and September 2016, involving 843 senior figures from across the UK insurance sector.

“It is true that machine learning can help us greatly expand the number of explanatory variables we might include to address a particular question, for example – but that does not necessarily mean that the answer will more easily emerge. What is more important is to fully grasp the dynamics of the process that led to the generation of the data in the first place.”

He continues: “If you look at how a model is constructed, for example, you will have multiple different model components all coupled together in a highly nonlinear, complex system. Unless you understand these underlying structures and how they interconnect, it can be extremely difficult to derive real insight from just observing the resulting data.”

“WE NEED TO ENSURE THAT WE CAN EXPLAIN THE RATIONALE BEHIND THE CONCLUSIONS”

PETER HAHN

ZURICH NORTH AMERICA

Hahn also highlights the potential ‘black box’ issue that can surround the use of machine learning. “End users of analytics want to know what drove the output,” he explains, “and when dealing with algorithms that is not always easy. If, for example, we apply specific machine learning techniques to a particular risk and conclude that it is a poor risk, any experienced underwriter is immediately going to ask how you came to that conclusion. You can’t simply say you are confident in your algorithms.”

“We need to ensure that we can explain the rationale behind the conclusions that we reach,” he continues. “That can be an ongoing challenge with some machine learning techniques.”
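One way to picture what an explainable output looks like is a simple additive score, where each factor's contribution can be read off directly. The sketch below assumes a linear model with hypothetical weights and factor names; it is not a technique attributed to Hahn or Zurich, and more complex algorithms generally need separate attribution methods to produce a comparable breakdown.

```python
def explain_score(weights, bias, features):
    """Return a risk score plus each factor's contribution, largest effect first."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical weights for a commercial auto risk score (higher means poorer risk).
weights = {"prior_claims": 0.5, "driver_turnover": 0.25, "years_in_business": -0.125}
bias = 1.0

# Hypothetical applicant.
applicant = {"prior_claims": 4, "driver_turnover": 2, "years_in_business": 8}

score, ranked = explain_score(weights, bias, applicant)
print(f"score = {score}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+}")
```

Here the underwriter's question, "how did you come to that conclusion?", has a direct answer: the list shows which factors pushed the score up or down and by how much.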

There is no doubt that machine learning has a part to play in the ongoing evolution of the insurance industry. But as with any evolving technology, how, where and how extensively it is used will be influenced by a multitude of factors.

“Machine learning has a very broad scope of potential,” concludes Hahn, “but of course we will only see this develop over time as people become more comfortable with the techniques and become better at applying the technology to different parts of their business.”